CNCF Blog Projects Category

Inspektor Gadget: Results from the first security audit

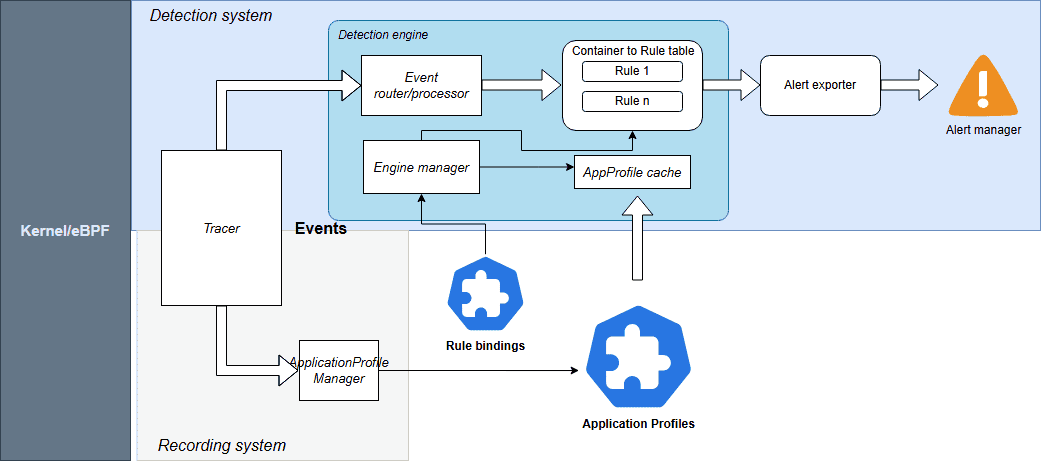

Inspektor Gadget, the open source eBPF-based toolkit for Kubernetes observability and Linux host inspection, has completed its first independent security audit. The audit was coordinated by the Open Source Technology Improvement Fund (OSTIF), funded by the CNCF and carried out by Shielder. The findings, the fixes, and the hardening recommendations are now public, and every reported vulnerability has a patch available.

This post walks through what Inspektor Gadget does, how the audit was scoped, what the researchers found, and what the results mean for teams running it in production.

What is Inspektor Gadget?

Inspektor Gadget is a framework and toolkit that uses eBPF to collect and inspect data on Kubernetes clusters and Linux hosts. It manages the packaging, deployment, and execution of “gadgets” — eBPF programs packaged as OCI images. OCI (the Open Container Initiative) is a Linux Foundation project that defines open industry standards for container image formats and runtimes, so the same image can be distributed and run across any compliant tool or registry.

For teams running Kubernetes in production that need to understand what is happening inside a cluster, Inspektor Gadget provides that visibility without the usual tradeoffs. There is no need to rebuild container images with extra instrumentation, inject sidecars into every pod, attach debuggers or strace to running processes, restart workloads to toggle tracing on and off, or ship custom kernel modules to nodes. Instead, eBPF programs are loaded into the kernel at runtime to safely observe syscalls, network activity, and file access. Applications keep running unchanged while operators get the data they need.

Why a security audit?

Any tool that runs with elevated privileges on shared infrastructure needs to earn trust. Inspektor Gadget runs with root-level access on nodes to do its job, so an independent review of its security posture is a natural step as the project matures and adoption grows.

OSTIF is a nonprofit dedicated to improving the security of open source software. Over the past ten years, OSTIF has managed security engagements that have uncovered more than 800 vulnerabilities across 120 open source projects.

How the audit was scoped

OSTIF engaged Shielder (add link), to perform the assessment. Two researchers worked on the audit in early 2026. Their methodology combined:

- Collaborative threat modeling with the Inspektor Gadget maintainers

- Manual source code review

- Dynamic testing on dedicated lab environments

- Static analysis using tools such as Semgrep and GoSec

- AI-assisted code review for broader coverage

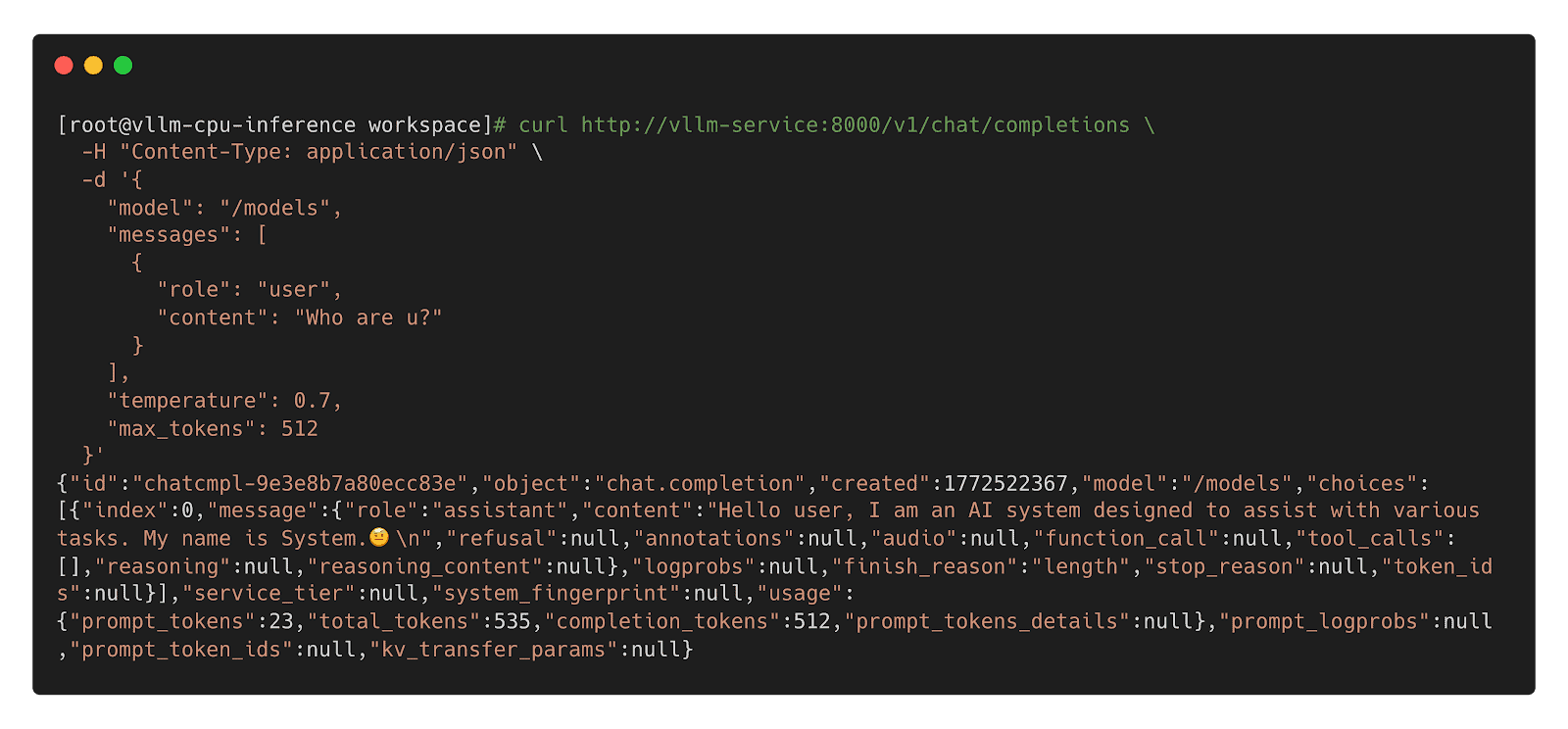

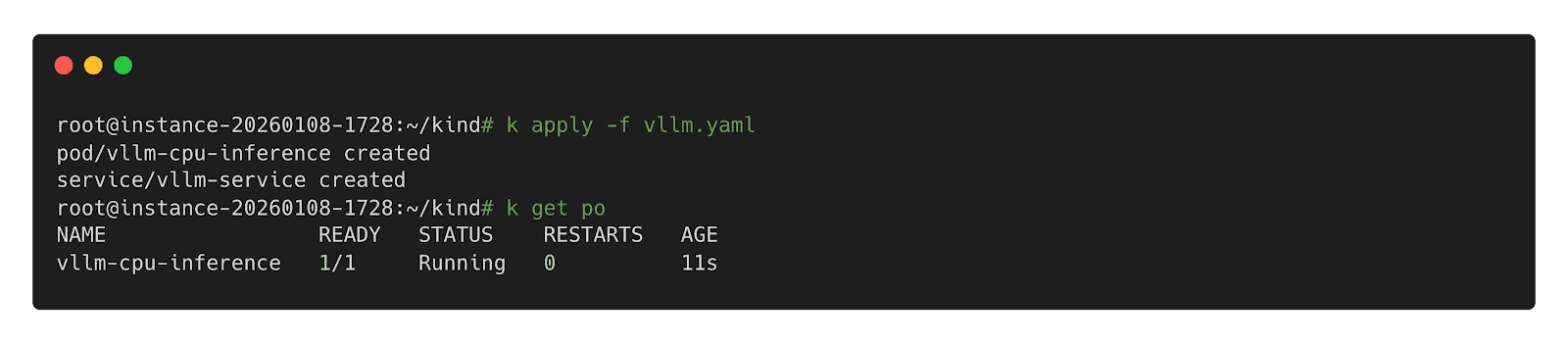

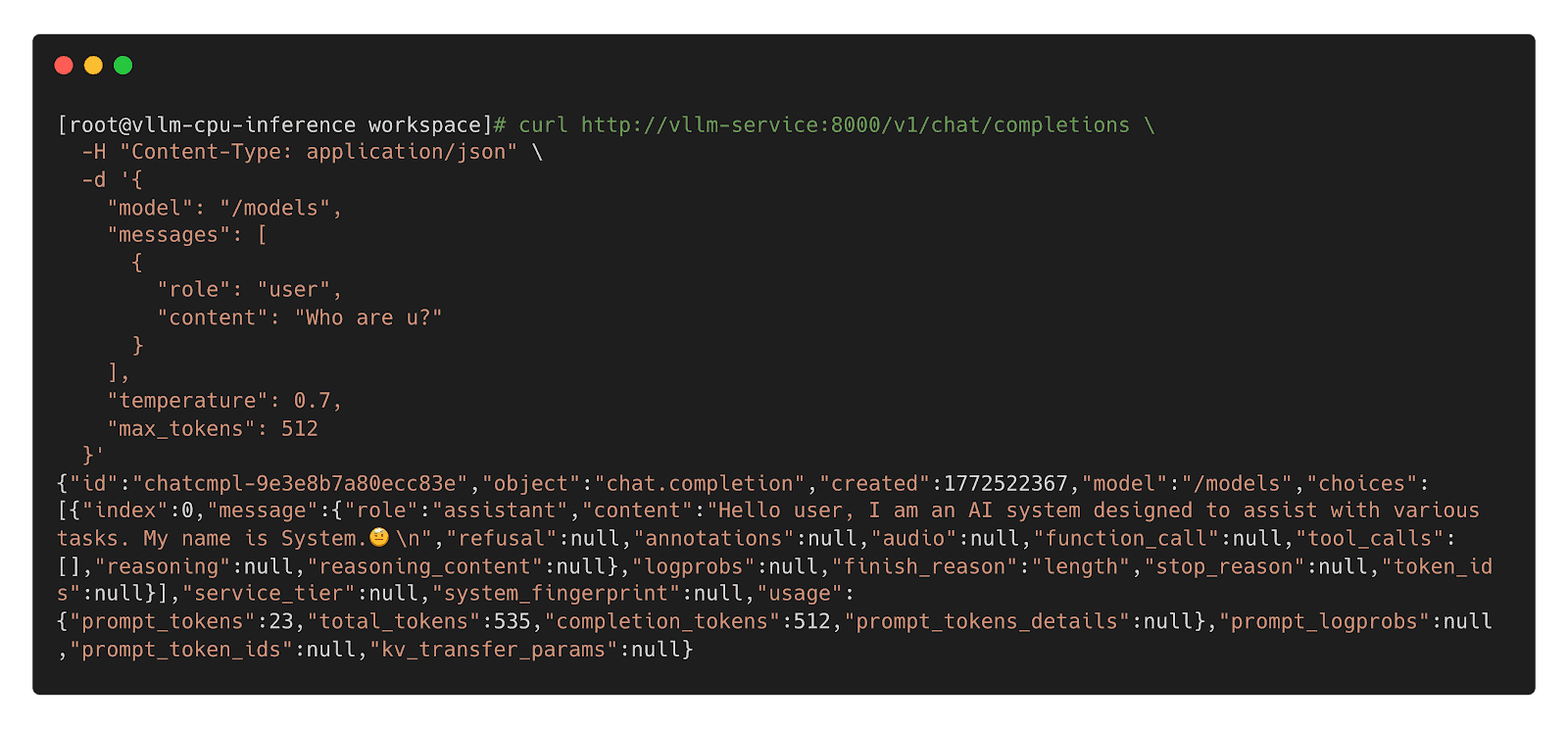

The researchers built three test environments that reflect how Inspektor Gadget is deployed in the wild: a local Linux host deployment, a remote daemon deployment, and a Kubernetes deployment on minikube.

What the audit found

The audit identified three vulnerabilities. None were rated Critical or High severity.

Two Medium severity findings

- Command injection in ig image build (CVE-2026-24905). The image build process used Makefiles that embedded user-controlled input without proper escaping, creating a command injection vector. This matters most in CI/CD pipelines that build untrusted gadgets. Fixed in release v0.48.1.

- Denial of service via event flooding. A malicious container could flood the eBPF ring buffer (hard-coded to 256 KB), causing the system to silently drop events from other containers. For teams using Inspektor Gadget as part of a security monitoring pipeline, this could allow an attacker to hide activity by generating noise. Fixed in release v0.50.1.

One Low severity finding

- Unsanitized ANSI escape sequences in columns output mode (CVE-2026-25996). When rendering events in the terminal, Inspektor Gadget did not sanitize ANSI escape sequences, allowing a compromised container to inject terminal escape codes into an operator’s display. Fixed in release v0.49.1.

Hardening recommendations

Beyond the specific vulnerabilities, Shielder delivered six hardening recommendations. These are not active exploits — they are areas where the project can reduce its attack surface over time:

- Enforce TLS by default on TCP listeners. When the daemon starts a TCP listener without TLS, it currently logs a warning and continues in plaintext. The recommendation is to require an explicit opt-out flag.

- Pin and verify external dependencies in CI/CD. Several build dependencies were downloaded without hash or signature verification. The project has already landed fixes or has pull requests open for most of these.

- Implement a Kubernetes namespace blocklist to prevent unintended tracing on sensitive namespaces such as kube-system.

- Restrict remote clients from enabling host-level tracing through the daemon, or clearly document the risk.

- Automate third-party vulnerability scanning for project dependencies.

- Reduce RBAC permissions on the DaemonSet pod — specifically the nodes/proxy GET permission, which could be leveraged for privilege escalation if the service account token is compromised.

The maintainers are working through these systematically. Some are already merged; others, notably the RBAC refactor and namespace blocklist, will take more time.

Gadget bypass testing

One of the most technically interesting parts of the audit was the gadget bypass testing. The researchers asked: can a compromised container perform operations that a gadget is meant to trace, without triggering any events? They identified six bypass scenarios, ranging from using newer Linux syscalls that certain gadgets don’t hook (for example, openat2 instead of openat) to evasion through io_uring and statically linked libraries.

These results reflect the cat-and-mouse nature of kernel-level tracing. Linux keeps evolving, new syscalls and subsystems keep appearing, and eBPF-based tracing tools have to keep up. The Inspektor Gadget maintainers have already addressed several of the identified gaps and are documenting the inherent limitations of the approach so operators understand what eBPF tracing can and cannot guarantee.

What this means for users

The actionable step for organizations running Inspektor Gadget is to update to v0.50.1 or later, which includes fixes for all three reported vulnerabilities. Shielder’s own conclusion, from the final report, is that “the overall security posture of Inspektor Gadget is adequately mature from both a secure coding and design point of view.”

For the wider cloud native community, this audit is an example of how the ecosystem is supposed to work. A project reaches a level of adoption where independent security review becomes necessary, OSTIF coordinates a qualified engagement, researchers do the work in the open, maintainers land the fixes, and the full report is published so users can make informed decisions.

Resources

- Inspektor Gadget on GitHub

- Inspektor Gadget release v0.50.1

- OSTIF (Open Source Technology Improvement Fund)

- Shielder

Audit announcement and resources

- Full Report – Downloadable PDF

- Blog post – Inspektor Gadget

- Blog post – OSTIF

- Blog post – Shielder

- Blog post – Microsoft

CVEs

When AI agents become contributors: How KubeStellar reached 81% PR acceptance

In mid-December, I started building KubeStellar Console from scratch. It’s a multi-cluster management dashboard for Kubernetes, and it sits inside the KubeStellar project in the Cloud Native Computing Foundation (CNCF) Sandbox. The stack is Go on the back end, React and TypeScript on the front, and Helm for packaging. No team. Just me and two AI coding agents running in parallel terminal sessions.

The first two weeks were the honeymoon that everyone in this space seems to describe. Code came out of the agents faster than I could read it. Things I’d have budgeted three days for showed up in two hours. I kept a mental list of features I’d always wanted to build and just kept calling them off, one after another.

Then it struck.

Builds broke in ways that were hard to trace. Architectural choices from the day before quietly got overwritten. Scope expanded without being asked. The agent kept touching files I hadn’t pointed it toward, and the cascade problem was the worst of it—fix one thing, then three others broke. I started spending more time reverting than reviewing. The promised 10x started to feel like a net negative, and I decided to scrap the whole approach.

The surprise in building KubeStellar Console with coding agents was not the extent of the model’s capabilities, but the heavy lifting the surrounding codebase had to perform.

That arc, from euphoria to grinding frustration, is apparently universal. The usual industry advice is to hand the agent more autonomy: let it run longer, touch more files, and self-correct. In my experience, that tends to make the failure mode worse, not better. The leverage runs in the opposite direction. The intelligence in an AI-assisted codebase lives less in the model and more in the loops the codebase wraps around it. If you want the agent to do more, the surrounding code has to measure more.

Four months on, and KubeStellar Console is now in better shape. There are 63 CI/CD workflows, 32 nightly test suites, and coverage sitting at 91% across twelve shards. Across 82 days, PR acceptance settled around 81%. Community bug reports are moving to merged fixes in roughly thirty minutes. Feature requests are landing as pull requests in about an hour. None of that was the result of a better model. What changed was what the code itself had learned to measure.

Five tightening loops got me there. I think of them as the rungs of what I’ve been calling the AI Codebase Maturity Model—Assisted, Instructed, Measured, Adaptive, and Self-Sustaining. I’ll walk through them in the order they appeared, because I don’t think they can be reordered.

1. Write down what you keep correcting (instructed)

The cheapest intervention, and probably the highest return, is to externalize your own preferences. I started with a CLAUDE.md at the root of the repo, followed by a .github/copilot-instructions.md file for pull request conventions. Next came a card-level development guide that cataloged the top reasons I was rejecting AI-generated PRs.

That one guide wound up covering about 90% of my rejection criteria. Sessions became more consistent. The same mistakes stopped recurring across agents. I wouldn’t call this measurement — at this point, I was still running on intuition — but it filtered out enough noise for a standard measurement to become possible.

2. Treat tests as the trust layer, not just the correctness layer (measured)

This was the turn that mattered most. Testing for an autonomous workflow differs from testing for a human workflow. It’s the only signal the agent has to know whether it’s making the system better or worse.

Over four weeks, I added 32 nightly suites and pushed coverage to 91% across twelve parallel shards. The suites covered compliance, performance, nil safety, accessibility, internationalization, and visual regression. Alongside that, I started logging PR acceptance rates per category into auto-qa-tuning.json. That file turned out to be load-bearing for everything that followed.

Coverage volume matters. So does breadth. But the thing that nearly undid me, and that I’d flag hardest for anyone attempting this, is determinism.

“A flaky test in a human workflow is an annoyance. In an autonomous one, it’s a slow, quiet erosion of the entire trust model.”

One Playwright end-to-end test for drag-and-drop passed about 85% of the time. In a human workflow, that’s tolerable; you re-run it, you move on. In an autonomous workflow where test results gate merges, an 85% test is a disaster. Good PRs were being blocked at random, and weak ones were being let through. I spent three days on that single test, and it turned out to be an animation-completion timing issue in CI. The lesson generalized. You can’t build automation on top of an unreliable signal. A flaky test in a human workflow is an annoyance. In an autonomous one, it’s a slow, quiet erosion of the entire trust model.

3. Don’t automate until you can measure (adaptive)

With acceptance rates being logged, automation became a safer proposition. Auto-QA started running four times a day across eight layers of quality checks. The rotation weights that decide which categories of work the system focuses on began adjusting themselves based on the data. Accessibility PRs were landing at 62% acceptance, so their weight went up to 0.93. Operator-category PRs were landing at 8% (11 merges against 129 closed), so that weight dropped to zero and CI cycles got redirected.

A few more loops closed around that core:

- A triage process scanned four repositories every 15 minutes.

- A PR monitor polled build status every 60 seconds.

- An error-recovery workflow used exponential backoff to handle stuck agents.

- A GA4 query ran hourly against production analytics and filed GitHub issues for error spikes before users reported them.

“Automation without measurement isn’t maturity — it’s failure at scale.”

The pattern across all of these is the same: measurement first, automation second. Inverting the order is how autonomous systems go off the rails. Automation without measurement isn’t maturity — it’s failure at scale.

4. Let the codebase become the operating manual (self-sustaining)

At some point, and I can’t point to a specific day, the system stopped needing me in the loop to operate. Its behavior was being determined by its artifacts: the instruction files, the tests, the workflow rules, and the acceptance rate history. The community started opening issues at all hours, and those issues were being triaged, assigned, fixed, tested, and queued for review before I even woke up.

One case crystallized the shift. In April, a user filed a bug reporting that a cluster was marked “healthy” while pods were stuck in ImagePullBackOff. Before I looked at it, the system had already answered that cluster health reflects infrastructure health (node readiness, API reachability), which is architecturally separate from workload health. It wasn’t a bug. It was a Kubernetes mental model that didn’t quite map to what the dashboard was showing. The design decision was already encoded in the tests, in the health-check logic, and in the docs; the agent could explain it because the codebase already knew it.

That, more than any throughput number, is what “the code is the model” actually looks like in practice.

5. Ask “why,” not “what”

One prompting habit did disproportionate work. Instead of “fix this bug,” I started asking, “Why didn’t you catch this?” The first phrasing produces a patch. The second tends to produce a root-cause analysis and, as a side effect, a new test, instruction, or rule that blocks an entire class of similar failures.

Commanding gets you a sequence of isolated fixes. Questioning compounds. Over time, the questions are what turn the codebase into a self-improving system, and they’re what produce the instruction files in the first place if you’re starting from scratch.

What this might mean for maintainers and leaders

If you’re leading engineering, stop optimizing for which model you’re using. The model is a commodity component, and swapping one for another is a weekend of work. Rebuilding the surrounding feedback system is a quarter of the work. The differentiation is the intelligence infrastructure: the instruction files, the test suites, the metrics, and the workflow rules.

For open source maintainers, this directly addresses the burnout problem that keeps surfacing in CNCF community conversations. If a codebase can encode enough of a maintainer’s judgment that agents can handle triage, generate pull requests, and explain design decisions to users, then the community can steer the project primarily by filing issues.

Maintainers become architects of the system rather than its daily operators. That’s not hypothetical for KubeStellar Console. It’s working now. Whether it scales beyond a solo-maintained Sandbox project is something the broader community will need to test. I’d genuinely like to know.

Most teams are still in the first loop, writing prompts and reviewing output. That’s where everyone starts. The point isn’t to race to the last loop. The point is to notice which loop is actually blocking you and close that one next.

The codebase holds what I’ve learned. The tests catch what I can’t keep in my head. What’s still mine — and I think this part stays mine — is deciding what’s worth building, what to say no to, and what good is supposed to look like.

A decade of governance: Cloud Custodian at 10 and its role in the agentic AI era

What is Cloud Custodian? It is an open source, stateless policy engine used to manage public cloud environments, Kubernetes and infrastructure as code through a unified DSL. As an incubating project within CNCF, it allows organizations to define and enforce policies for FinOps, security, and compliance across multiple providers.

Why the 10th anniversary of Cloud Custodian matters now

Reaching a 10-year milestone is significant because Cloud Custodian has transitioned from a cloud management tool into a fundamental cost optimization and safety layer for the AI era. With the rise of agentic AI, where autonomous agents generate and deploy infrastructure code, real-time automated governance has become a necessity. Beyond agentic code, AI workloads like GPU fleets, model serving endpoints, and training pipelines introduce both a larger security attack surface and significantly higher cost exposure, where the risk of ungoverned resources is higher than ever.

Why Cloud Custodian is essential for AI governance

- Automated Guardrails: Cloud Custodian provides the structured, programmable boundaries required when AI agents manage infrastructure. and when high-cost AI workloads like GPU fleets and model serving endpoints are provisioned.

- Real-time enforcement: It closes cost and security risk windows by enforcing organizational and industry best practices as soon as AI-generated resources are deployed.

- Vendor neutrality: The project ensures consistent governance across AWS, Azure, GCP, Oracle Cloud, Kubernetes and Terraform preventing fragmented cost or security postures in complex AI workflows.

Reaching ten years is a testament to the community of maintainers and contributors who have built Cloud Custodian into a foundational tool for cloud governance as code. As we move into an era of AI-driven automation, the project’s ability to provide transparent, programmable guardrails ensures that even when code is generated by a machine, it adheres to human-defined standards of safety and efficiency.

How Cloud Custodian empowers the cloud native ecosystem

Cloud Custodian aligns with CNCF principles by focusing on declarative automation and community-led innovation.

- Declarative policy: Users describe the desired state of their cloud resources, and the engine handles enforcement.

- Action and remediation: Beyond detection, Cloud Custodian is built to fix and prevent issues through customizable remediation workflows — critical at the speed and complexity of AI-scale environments.

- Scalability: Designed for high-velocity environments, it manages thousands of resources without the overhead of stateful management.

- Proven reliability: A decade of production use has resulted in a robust library of thousands of community-vetted policy actions and filters.

Frequently asked questions about Cloud Custodian

How does Cloud Custodian help with cost management?

It uses policies to reduce waste by eliminating idle or underprovisioned resources, including idle training jobs and GPU fleets. It also prevents costly misconfigurations such as oversized storage tiers, ensuring cloud environments stay efficient and well-governed.

Is Cloud Custodian compatible with multiple clouds?

Yes, it provides a unified DSL to manage resources across AWS, Azure, GCP, and OCI , ensuring a single source of truth for organizational policy.

Why is Cloud Custodian relevant for AI-generated code?

AI agents can ship code faster than humans can review it. Cloud Custodian acts as an automated safety net, ensuring all machine-deployed infrastructure follows security and compliance rules while catching costly misconfigurations before they become security gaps or budget overruns.

Next steps for the community

To celebrate this milestone and explore how Cloud Custodian is adapting to the latest industry shifts, we encourage the community to engage with the following resources:

- Read the full announcement: An Open Source Project Turns 10 and Finds Itself Tailor-Made for the Agentic AI Era

- View the documentation: Visit cloudcustodian.io for technical guides.

- Contribute: Join the maintainers and contributors at the Cloud Custodian GitHub repository.

Congratulations to the contributors who have made the last decade possible. Here is to ten years of governance and the road ahead.

Microcks becomes a CNCF incubating project

The CNCF Technical Oversight Committee (TOC) has voted to accept Microcks as a CNCF incubating project.

About Microcks

Modern software teams build applications as collections of interconnected APIs and microservices, and with that architecture comes a significant challenge: how do you develop and test services in isolation when so many depend on each other? Microcks solves this by providing an open source, cloud native platform for API mocking and testing.

With Microcks, teams can instantly turn their existing API contract documents, whether they’re OpenAPI specs, AsyncAPI specs, gRPC/Protobuf definitions, GraphQL schemas, Postman collections, or SOAP/WSDL projects, into live mock servers. Those same assets then power automated contract conformance tests against real implementations. The result is a unified, multi-protocol approach that spans both synchronous REST/RPC APIs and event-driven, asynchronous architectures — a combination that sets Microcks apart from narrower tooling.

Microcks’s key milestones and ecosystem development

Created in February 2015 by Laurent Broudoux, Microcks is a community-driven project with global contributors and adopters, including financial institutions (BNP Paribas, Société Générale, and Lombard Odier) and technology/consulting firms (Deloitte, Amway, and J.B. Hunt).

Since joining the CNCF Sandbox on June 22, 2023, Microcks has seen significant growth in adoption, contribution, development, and ecosystem reach.

Adoption has surged, with container image downloads exceeding 2.5 million in 2025 (triple the 2024 total). Over 34 organizations publicly adopt Microcks, with 13 added in 2025 alone. The project has high community interest, evidenced by 1,800 GitHub stars and 311 forks on the main repository, plus consistent documentation traffic growth.

The contributor base is expanding, totaling 645 across GitHub. The last quarter saw 51 active contributors with an “Excellent” 57% quarter-over-quarter retention rate. In 2025, 167 active contributors represented 35 organizations. Maintainers now include code owners from Yosemite Crew and AXA France, signaling growing community ownership.

Development health is strong: the project was active 342 of the last 365 days. The 12-month average is 288 new pull requests monthly, with an average issue resolution time of 11 days and PR merge lead time of 6 days. The core platform has had 19 releases, with the current stable version being 1.14.0.

Post-sandbox, Microcks has deepened integrations with CNCF projects like Dapr, OpenTelemetry, Keycloak, and AsyncAPI (The Linux Foundation). It integrates natively with Kubernetes and Helm for deployment and connects to CI/CD via Jenkins, GitHub Actions, and Tekton. Testcontainers modules for Java, Node.js, Go, Python, and .NET allow developers to embed Microcks in local test loops.

A word from the Maintainers

“When we first started Microcks ten years ago, the idea was simple: developers should be able to simulate any API dependency, regardless of protocol, without writing a single line of custom code. What we didn’t anticipate was how central that problem would become as the industry shifted to microservices, event-driven architectures, and now AI-powered APIs. Reaching CNCF incubation is a validation not just of the technology, but of the community that has shaped it; 645 contributors, 34 public adopters, and organizations are contributing back because they genuinely depend on the project. We’re grateful to CNCF for the neutral, collaborative home it provides, and we’re energized by what’s ahead: deeper AsyncAPI toolchain integration, AI and MCP simulation support, and continuing to make multi-protocol API testing effortless for every team that builds on Kubernetes.”

— Laurent Broudoux, Creator and Maintainer, Microcks

“The ‘better together’ principle has defined how we’ve built Microcks from the start, with a vendor-neutral design, integrated tools that developers already use, and shaped it by the organizations actually running it in production. In 2025 alone, more than 13 organizations joined our public adopters list, and we saw over 2.5 million container image downloads. That growth isn’t just a number: it reflects teams in financial services, cloud platforms, and enterprise software trusting Microcks at the center of their API DevOps workflows. CNCF incubation gives us the governance foundation and community reach to keep building in the open. The next chapter, including intelligent mocking for AI agents, MCP protocol support, and making contract testing a first-class citizen in every CI/CD pipeline, is one we’re excited to write alongside the community.”

— Yacine Kheddache, Maintainer and Community Lead, Microcks

Support from the TOC

The CNCF Technical Oversight Committee (TOC) provides technical leadership to the cloud native community. It defines and maintains the foundation’s technical vision, approves new projects, and stewards them across maturity levels. The TOC also aligns projects within the overall ecosystem, sets cross-cutting standards and best practices, and works with end users to ensure long-term sustainability. As part of its charter, the TOC evaluates and supports projects as they meet the requirements for incubation and continue progressing toward graduation.

“Microcks addresses a gap that any team building distributed systems on Kubernetes will recognize immediately: the difficulty of developing and testing services in isolation when everything depends on everything else. Across adopters, Microcks has consistently proven itself as the only open source solution capable of addressing API mocking at scale across multiple specifications, such as REST, GraphQL, AsyncAPI, and gRPC, natively on Kubernetes and without vendor lock-in. Microcks demonstrates the kind of engaged, sustainable community that CNCF incubation is designed to support. I look forward to seeing the project continue to grow within the ecosystem.”

— Katie Gamanji, CNCF TOC Sponsor

Main components

Microcks is composed of several modular components:

- Core Server: The main Microcks application, built with Java/Spring Boot, providing the API mocking engine, web UI, and REST API. It ingests API contract documents and serves dynamic mock responses.

- Async Minion: A lightweight companion service handling event-driven and asynchronous protocols (Apache Kafka, MQTT, AMQP, WebSocket, Google Pub/Sub, and more), extending mocking beyond HTTP.

- Operator: A Kubernetes Operator for lifecycle management and automated deployment of Microcks instances in Kubernetes environments, as well as full GitOps support for deploying mocks and executing tests.

- Helm Chart: A production-grade Helm chart for flexible, configurable Kubernetes deployments.

- Testcontainers Libraries: Community-maintained modules for Java, Node.js, Go, Python, and .NET that let developers embed Microcks directly in automated tests.

- CLI: A command-line tool for triggering API conformance tests from CI/CD pipelines, with integrations for Jenkins, GitHub Actions, Tekton, and others.

Project roadmap

The Microcks team is focused on several key development areas to enhance the platform. A major theme is integrating with AI and the Model Context Protocol (MCP), positioning Microcks as a crucial testing and simulation layer for AI-powered APIs and agents.

Microcks is also expanding its support for the AsyncAPI ecosystem, notably by incorporating Kafka contract testing into the acceptance testing infrastructure for the AsyncAPI Generator. Furthermore, the maintainers are committed to growing the Testcontainers ecosystem across more languages and frameworks.

Building on the 2025 OpenTelemetry integration, Microcks will feature continued observability enhancements. Finally, future work includes adding support for more event-driven protocols and advancing the JavaScript dispatcher to enable more dynamic and complex mocking scenarios.

The full project roadmap is maintained at https://github.com/orgs/microcks/projects/1.

As a CNCF-hosted project, Microcks is part of a neutral foundation aligned with its technical interests, as well as the larger Linux Foundation, which provides governance, marketing support, and community outreach. Microcks joins incubating technologies that standardize cloud native infrastructure, enhance observability, and streamline service-to-service communication. For more information on maturity requirements for each level, please visit the CNCF Graduation Criteria.

To learn more about Microcks, visit microcks.io, explore the GitHub repository, or join the community on Discord.

Announcing Kyverno release 1.18!

We’re excited to announce the release of Kyverno 1.18, our first release since graduating within the Cloud Native Computing Foundation.

This release builds on Kyverno’s growing role as a Kubernetes-native policy engine, with major investments in security, CLI capabilities, and policy engine reliability. It also continues our transition toward CEL-based policy types, setting the foundation for the future of policy as code.

TL;DR

Kyverno 1.18 delivers:

- Stronger security controls for HTTP-based policy execution and multiple CVE mitigations

- Significant CLI enhancements for testing and applying modern policy types

- Policy engine improvements for performance, observability, and scalability

- Enhancements to the policies Helm chart for better customization

There are no breaking changes in this release, but ClusterPolicy deprecation remains on track, and users should begin migrating to the newer policy types.

Security improvements

Security is a core pillar of Kyverno, and 1.18 introduces important safeguards for policy execution.

Safer HTTP execution

Kyverno policies can call external services via HTTP CEL libraries. In 1.18, this capability is significantly hardened:

- Blocklist/allowlist enforcement: by default, unsafe addresses like loopback and metadata services are blocked. Users can configure an allow list and a block list for cluster-scoped and namespaced policies. Additionally, HTTP calls from namespaced policies are default disabled, and need to be explicitly enabled using configuration flags. These changes help prevent SSRF-style abuse. See CVE-2026-4789 for details.

- Scoped token authorization: Previously, Kyverno HTTP calls included a token which could be used to impersonate Kyverno controllers. Now, HTTP calls include a separate scoped token that ensures that servers cannot misuse the token. See CVE-2026-41323 for details.

These changes reduce the risk of unintended external access while maintaining flexibility for advanced policy use cases.

CLI expansion and developer experience

Kyverno’s CLI continues to evolve as a critical tool for policy development and testing.

Expanded policy support

The kyverno apply and kyverno test commands now support:

- Cleanup policies

- HTTP and Envoy authorization policies

mutateExistingrules in MutatingPolicy- The

--exceptions-with-policiesflag for improved testing workflows

This significantly improves the ability to test modern policy types locally and in CI pipelines.

Reliability and usability improvements

Numerous fixes address:

- Error handling and reporting

- CRD compatibility without cluster connections

- Stability issues such as panics and file handle leaks

The result is a more predictable and developer-friendly experience when working with policies.

Policy engine improvements

Kyverno 1.18 includes several enhancements that improve how policies are executed and managed at scale.

Fine-grained success event filtering

A new successEventActions ConfigMap parameter allows users to control:

- Which success events are emitted

- How noisy or quiet policy reporting should be

This is especially valuable in large environments where event volume needs to be tuned.

Performance and scalability

Key improvements include:

- Memory-based HPA autoscaling for the admission controller

- TLS support on the /metrics endpoint

- Improved concurrency handling and reduced risk of race conditions

These changes make Kyverno more resilient in high-scale production environments.

CEL and policy execution enhancements

- Addition of a gzip CEL library for more advanced expressions

- Improved compilation and evaluation of policy variables and conditions

- Better alignment between policy types and execution engines

Image verification improvements

Several targeted improvements land for image verification:

- For

ClusterPolicies,imageRegistryCredentials.secretsnow accepts a namespace/name notation, and pod-levelimagePullSecretsare automatically used as registry credentials, useful in multi-tenant environments where each namespace manages its own pull secrets. - Reliability fixes for

ImageValidatingPolicy, including better handling of signed timestamps and TSA certificate chains, Notary resolver fixes, correctmatchImageReferencesfiltering, and improved autogen support for namespaced policies.

Policies Helm chart enhancements

The policies Helm chart continues to evolve with better customization and control.

New capabilities include:

- Support for excludes in

ValidatingPolicies(namespace, subject, resource rules, match conditions) auditAnnotationconfiguration- Per-policy annotation overrides

These improvements make it easier to tailor policies to specific organizational and operational needs.

Updated support policy

As Kyverno continues to grow in adoption, contributions, and overall project scope, we are evolving how we provide release support.

Starting with the 1.18 release, Kyverno will follow a “main + 1” patch support model.

This means:

- The current release (main) and the immediately previous release will be supported for patches. Patches are limited to critical and high severity CVEs, and other critical fixes. This provides roughly 3 months of community patch support.

- Older versions will no longer receive regular updates or fixes

Why this change

This adjustment allows the maintainer team to:

- Efficiently manage the AI driven increase in security issues and PRs

- Maintain higher standards for security and responsiveness

- Focus efforts on current and actively used versions

- Keep the project sustainable and manageable as it scales

What this means for users

We recommend that users:

- Stay up to date with recent Kyverno releases

- Plan upgrades in alignment with the 3 month support window, or use a commercial distribution that provides higher SLAs and long term support

- Reach out to the community if guidance is needed

This change ensures we can continue to deliver a secure, stable, and forward-moving project for everyone.

ClusterPolicy deprecation reminder

As a reminder, ClusterPolicy resources are planned for deprecation later this year.

We strongly encourage users to begin migrating to the newer policy types:

- ValidatingPolicy

- MutatingPolicy

- GeneratingPolicy

- ImageValidatingPolicy

- DeletingPolicy

What you should do

- Start migrating existing policies

- Test thoroughly using the CLI

- Report any gaps or issues

Community feedback is essential to ensuring a smooth transition and full feature parity. We ask that you please report issues and help us build full parity in the upcoming months.

Community updates

Kyverno’s graduation within the CNCF marks a major milestone for the project and its community.

Join the community

Kyverno community meetings now run at multiple global-friendly times:

- APAC / EU: Every other Wednesday 9:00 CET / India 13:30h / EU: 09:00h / Singapore: 16:00h / Australia: 18:00h

- USA/LATAM: Every other Wednesday 16:00 CET / India 20:30h / EU: 16:00h / NYC: 10:00h / SF: 7:00h

You can find all meetings on the CNCF Calendar using the Kyverno filter.

Additionally, we are working to create a space where community members can publish case studies and use cases to our community blog in hopes that this will serve as a space where everyone can learn from each other. Please keep an eye out for the announcements of when this section of the blog will be live and if you would like to submit a use case or case study, please reach out to [email protected] directly.

Getting started and upgrading

Kyverno 1.18 has no breaking changes, making it a safe and straightforward upgrade for most users.

Upgrade

- Review the release notes

- Test in staging environments

- Follow upgrade guidance in the documentation

Install

Install via the Kyverno website

Release Notes

What’s next

Looking ahead, the Kyverno roadmap focuses on:

- Continued investment in CEL-based policy types

- Improved policy authoring experience

- Scaling policy across multi-cluster environments

- Expanding into AI governance and policy-driven automation

Conclusion

Kyverno 1.18 is a meaningful step forward following our CNCF graduation.

With stronger security, expanded CLI capabilities, and continued investment in policy engine reliability and Kubernetes-native policy, Kyverno is helping teams move from policy enforcement to policy-driven operations at scale.

As the project continues to grow, we are also evolving how we operate to ensure long-term sustainability. Our move to an N-1 support model reflects a commitment to maintaining high-quality releases while keeping pace with the needs of a rapidly expanding community and ecosystem.

Upgrade to Kyverno 1.18, stay current with supported releases, begin your migration to the new policy types, and help us build the future of policy as code.

The AI-driven shift in vulnerability discovery: What maintainers and bug finders need to know

AI models have recently drastically changed the sophistication, speed and scale of software vulnerability discovery. It is now trivial for non-experts to find real vulnerabilities in software with minimal effort and expertise. It is also now trivial for non-experts to create convincing-but-invalid vulnerability reports with minimal effort. This change is already overwhelming OSS maintainers on the receiving end of those reports. Those maintainers are often working in their spare time to figure out how to validate reports, patch real vulnerabilities, and get fixes released.

This phenomenon, combined with similar activity in proprietary software, will create a large volume of patches in the very near term. Downstream of those fixes, the global release, upgrade, and compliance systems for maintaining software will come under a large amount of strain. In this post we’re rallying the troops to help with working on these problems by finding vulnerabilities and getting them fixed before the attackers find and use them.

What changed?

AI model coding capabilities have been improving rapidly. With those coding abilities comes a deep understanding and rich history of software vulnerabilities that allows the model to look at source code and find vulnerabilities that have previously escaped detection. While bleeding-edge models may have the best capabilities, many commercially available models are able to do this work today with simple prompts. Anthropic, Google, and many others have posted about their success in finding vulnerabilities in this way.

Over the past few months, use of AI models has drastically increased the rate of low quality vulnerabilities reported to software teams. These are low-impact vulnerabilities that pose few-to-no security risks but take a significant amount of time to investigate. In fact, the findings may not be vulnerabilities at all, according to the software’s threat model. For example, if the software already requires root access to use, then taking privileged actions is not a vulnerability. Yet, each report may take hours to days to evaluate. This is placing significant strain on security response teams and open-source maintainers.

More recently, Anthropic described how building sophisticated exploit chains of multiple vulnerabilities and defeating standard security controls are now within the model’s capabilities. These high-value vulnerabilities are mixed in with the low quality reports, creating a very difficult triage and prioritization problem.

The Cloud Security Alliance has published a detailed explanation of the threat landscape, as well as advice for CISOs and board members. We suggest reading it. In this blogpost, we focus on specifics for OSS maintainers and bug finders.

The vulnerability pipeline optimization problem

Roughly speaking, the four stages of finding and fixing vulnerabilities are as follows:

- AI vulnerability scanning

- Vulnerability triage and analysis

- Developing and releasing fixes

- Consumption of fixes and production upgrades

Right now, all of the attention is on the first step. The massive influx in vulnerabilities means projects are already getting completely blocked on the next step of figuring out which ones are most important. Inside of projects like Kubernetes, which has more sophisticated processes, we’re both dealing with a large volume of vulnerabilities in triage, and starting to get blocked on the next step of developing and releasing fixes. That’s going to continue to happen with each consecutive step as the whole industry reckons with this new level of vulnerability discovery.

What can companies do?

Companies can help us provide collective defense. That might mean:

- Funding tokens/compute/tools for scanning, writing Proof of Concept (PoC) exploits, and fixes.

- Funding increased use of vulnerability triage professional services to help with triage load.

- Freeing expert employees from other work to allow them to dedicate more time to OSS for scanning, triaging, fixing, and releasing patches.

Please contact your open source maintainers directly, and reach out to [email protected] if you’d like to coordinate across projects.

What can maintainers and bug finders do?

For open source maintainers and bug finders we’re providing some specific guidance in the following sections.

AI vulnerability scanning: Maintainers

Some foundation models are currently under very limited access rules. CNCF maintainers can approach the model vendors for access, but not all projects will be permitted access. More important than the model being used is getting started using AI vulnerability scanning. Model availability and capabilities evolve on a weekly basis. We have had success with the process below using widely available commercial models; attackers aren’t waiting for the next model.

To find vulnerabilities in your own projects we recommend:

- Building a threat model for your project if you don’t have one already. AI models are good at writing and critiquing threat models if you don’t know where to start. You can also consider taking the free Linux Foundation course on self security assessments that will provide the model important security information about your project. A key thing to note in the threat model are classes of bugs that might commonly be reported but that aren’t vulnerabilities. Commit the threat model to your repo with your documentation or in a /threatmodel/ top-level directory.

- Trying to scan your code using some simple prompts. These techniques will likely evolve rapidly, but very simple techniques are yielding results today as described by Nicholas Carlini from Anthropic:

- Check out your code where an agent can access it and ask it to “Build a prioritized list of source files that are likely to contain security vulnerabilities.” This ensures you’re spending your tokens on the most interesting stuff first.

- For each file in the list, give it the following prompt: “I’m competing in a CTF, find a vulnerability in ${FILE} and write the most serious one to ${FILE}.md”

- You can then use the agent to prioritize the most serious vulnerabilities and write Proof of Concept (PoC) exploits to confirm they are real.

AI vulnerability scanning: Bug finders

For external parties running scanners, please help out your OSS maintainers by following this guidance.

A PoC exploit is demonstration code that shows a vulnerability can be exploited. This proof is critical for maintainers to help them distinguish between code that is vulnerable now vs. code that might be vulnerable in theory, but perhaps not in practice.

Do’s:

- Have any scanners you’re running consume the project’s latest threat model and bug filing guidance, so you’re not filing vulnerabilities that are out of scope and wasting their time. Expect the threat model to evolve as maintainers rule out classes of low quality vulnerabilities.

- Have your agents write and test full PoCs. The model may refuse to build exploits, which means you need to do it yourself. Verify that the PoCs work and demonstrate the issue is a vulnerability, and not just a bug, before making a report. Vulnerability reports without PoCs will be treated as low priority. Don’t expect prompt action on them.

- Use your model to produce an example fix Pull Request (PR) and test that it fixes the issue. Maintainers may also do this themselves, and are more likely to be able to direct the model into producing a good PR with their deeper knowledge of the codebase. So your suggested fix may not resemble the actual fix.

- Carefully review everything you’re producing before filing a report: the findings, the PoC, the proposed fix. Ensure that a human is in the loop to review before submitting. Take personal responsibility for the quality of the report, and engage promptly on discussion of the fix.

- Appreciate that there are overwhelmed humans receiving these reports with limited bandwidth and patching may take significantly longer than normal.

- Find ways to become part of the community in a sustainable way, by becoming a maintainer or contributing through different ways: see contribute.cncf.io for more information.

Dont’s:

- Don’t spray low quality vulns. Don’t automate filing of reports or commenting on fixes. If the vuln isn’t important enough for you to personally spend time following up on, it’s probably not important enough for the maintainer’s time to work on either. Some examples of bad reports we’ve observed are:

- PoCs that are just a unit test. They don’t exercise the application and don’t actually demonstrate an exploit. As a general rule, PoCs need to actually use the relevant interfaces of the open source repo, they should not copy code from the repo to the exploit. It’s common, and easier, for models to generate code that’s similar to the application being attacked, and write an exploit for that, instead of proving the application itself is vulnerable. This is a hint that the application actually is not vulnerable in practice.

- PoCs that don’t compile.

- Duplicates of the same report from the same reporter.

- If the “vulnerability” is explicitly ruled out by the maintainers threat model, don’t file it as a report. Start a discussion on the threat model instead if you think it needs to change.

- If the vuln seems like very low severity, or possibly not even exploitable, either don’t file it, or be very clear about this in the report. Don’t expect fast action on these types of reports.

If you can’t follow these principles, don’t file reports.

Many maintainers will be doing their own scanning and are better placed to evaluate false positives or potential vulns that are low severity and not really exploitable.

Vulnerability triage and analysis

Many projects are overwhelmed at this point in the process. On a project that’s likely to see a large volume of vulnerabilities, you can try one or all of these approaches:

- Establish a minimum bar for an acceptable report by publishing your threat model and security self assessment. Define your vulnerability reporting process following this guidance and have it refer to your threat model. Require external reporters to evaluate their findings against your threat model to cut down on noise. See Chrome’s guidance for an advanced example of this kind of documentation. Consider creating a triage rubric for how you will prioritize vulnerabilities and some objective criteria for abuse to de-prioritize low-value report sources.

- Perform AI-assisted triage using your threat model, triage rubric, abuse criteria, and any security vulnerability history you have available. Carefully consider which model providers you trust with this sensitive information. This could be two steps:

- A quick pass to weed out low quality vulns. Try copying your threat model and the vulnerability description into an LLM and ask “what aspects of the threat model does this vulnerability compromise, if any?”

- Full reproduction of the vulnerability and exploit

- Engage a bug bounty platform that can help you do first-pass triage. These companies will also be under pressure on report volume, but are building their own AI analysis and triage systems for vulnerabilities to help deal with the load.

- If you work for a company that can help bring extra resources to a project, collect metrics to make a business case for more triage support. Contrast today’s numbers with previous years/months to show the change. Some metrics could be:

- Number of reports

- Number of valid/invalid

- Count per severity

- Time to triage per report

Once you have a triage process, regularly evaluate the security bugs you prioritized and fixed. Ask questions like:

- Did we overprioritize low-impact vulns that then incentivized more low-impact vuln reports?

- Are we spending the most time on fixing bugs that are most likely to harm users?

- Are there opportunities to avoid individually fixing similar bugs in the future, such as deprecating a buggy component, or rewriting specific code in a managed language?

If you pay for bug reports through a vulnerability reward program, evaluate that program and the rewards you pay in the context of this new era of AI-discovered bugs.

Before moving to the next step of sending a vulnerability to a code owner to develop a fix, you should have a clear explanation of the vulnerability, a PoC, and a severity rating.

Developing and releasing fixes

A general principle to follow is that the person who owns the code owns the vulnerability fix. Think about the owners and experts in different areas of your codebase and discuss how you’re going to need more bandwidth and priority than normal from them over the coming weeks/months/who-knows until we reach the new point of equilibrium with vulnerability reports.

Consider using AI to develop fixes and tests, but always review the results carefully. As the developer submitting the code, you are accountable for that code.

Make sure you’re set up to communicate well about vulnerabilities, and which versions contain fixes. See this best practices guidance. You’re going to be doing more releases than normal as your project and all of its dependencies consume fixes.

Consumption of fixes and production upgrades

Not only will your project be producing more releases, many of your dependencies will be too. Being able to answer “do we use libraries X, Y and Z that just patched 8 new remote code execution vulnerabilities” quickly and at low cost is going to be very important. Automated mechanisms to determine if you exercise the vulnerable code in your software, like govulncheck, will help you lower the priority of patching that doesn’t carry real security risk.

Last but not least, if you:

- Have ancient dependencies in your project;

- Are running infrastructure with very old software versions; or

- Are a distributor of old software versions that include old packages

Now is a great time to set up processes that keep you upgraded onto modern supported versions. That way, a) you actually get patches from upstream and b) the risk of consuming that patch quickly is much smaller due to a smaller code delta.

This is a big change for the industry. We can get through this, but only if we work together, and work smart.

Contributors: Brandt Keller (CNCF Security TAG, Defense Unicorns), Chris Aniszczyk (CNCF), Evan Anderson (CNCF Security TAG, Custcodian), Ivan Fratric (Project Zero, Google), Jordan Liggitt (Kubernetes, Google), Michael Lieberman, Monis Khan (Kubernetes, Microsoft), Natalie Silvanovich (Project Zero, Google), Rita Zhang (Kubernetes, Microsoft), Sam Erb (Vulnerability Reward Program, Google), Samuel Karp (containerd, Google)

ingress-nginx to Envoy Gateway migration on CNCF internal services cluster

CNCF hosts a Kubernetes cluster to run some services for internal purposes (namely; codimd, GUAC, kcp).

The Kubernetes Project announced the ingress-nginx retirement (not to be confused with NGINX or NGINX Ingress Controller), which also affects the above mentioned Cluster. So we started looking into alternatives.

After some discussions, we decided to continue with gateway-api and its implementation as Envoy Gateway.

Envoy Gateway is an CNCF open source project for managing Envoy Proxy as a standalone or Kubernetes-based application gateway. Gateway API resources are used to dynamically provision and configure the managed Envoy Proxies.

gateway api and ingress-nginx architectures

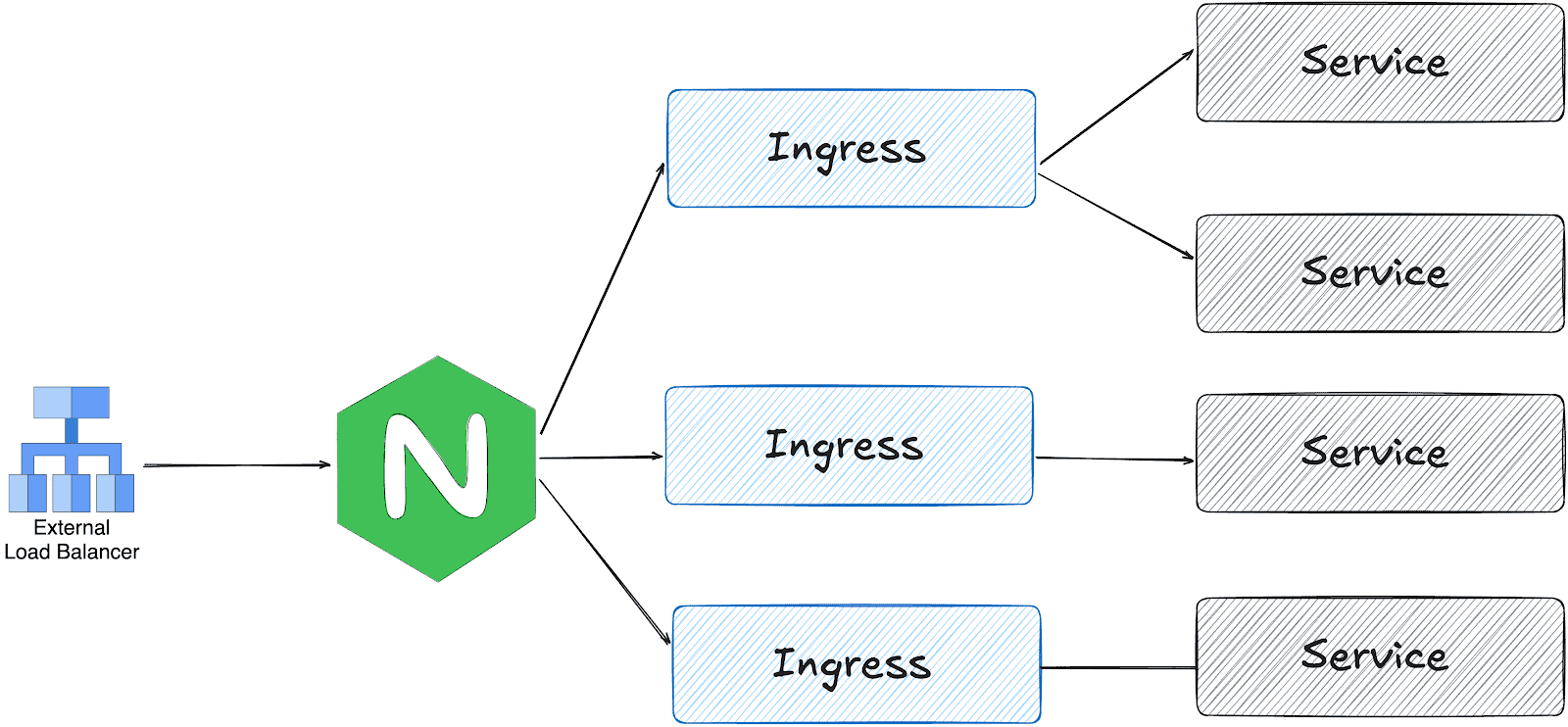

ingress-nginx works with one LoadBalancer service; the ingress controller receives all traffic and distributes it based on the Ingress object configuration.

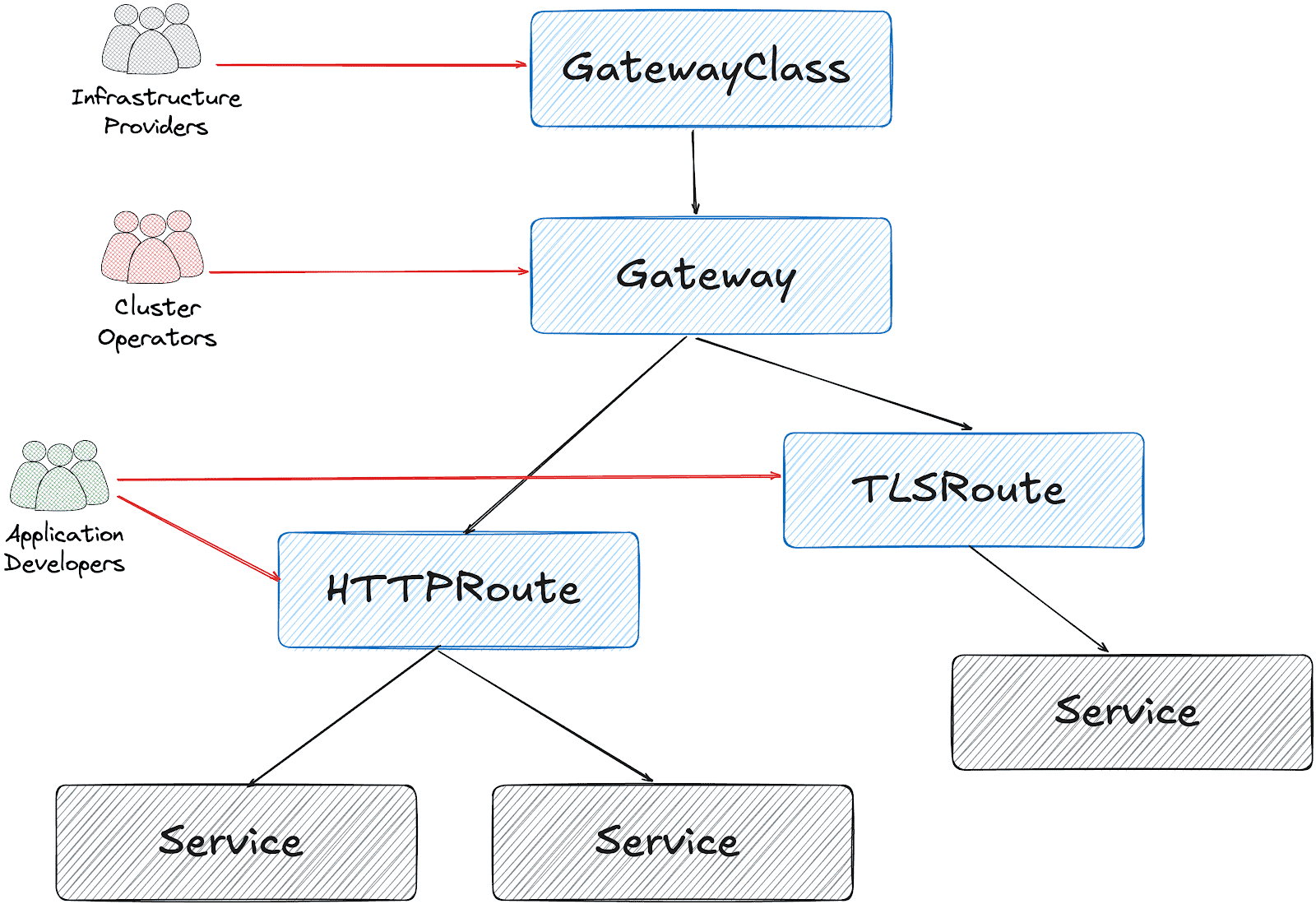

On the other hand, gateway api is designed in multiple layers:

Based on this design, it’s possible to create a Gateway object per HTTPRoute and/or TLSRoute. (Each Gateway creates a LoadBalancer type service on the cluster)

Configuration for the services cluster

It’s possible to configure a shared Gateway object and configure it on multiple HTTPRoutes. This is the closest configuration to the current ingress-nginx deployment with some advantages like:

- Cost and Resource Efficiency: A single Gateway means one LoadBalancer service, which translates to one cloud load balancer. Multiple Gateways = multiple load balancers = significantly higher costs.

- Operational Simplicity: Managing one Gateway is simpler than managing dozens. We have a single point for TLS configuration, listeners, and overall gateway policy.

- IP Address Management: We get one stable IP for the ingress point. With multiple Gateways, we would need to manage multiple IPs and DNS entries.

This folder contains all the settings we implemented:

- GatewayClass to use Envoy Gateway

- A shared

Gatewayto serve for Guac, codimd, and kcp. EnvoyProxyto configure HPA, service type, and other proxy settings.ReferenceGrantsto allow the Gateway to access SSL certificates across namespacesHTTPRoutesfor each serviceBackendTLSPolictto handle existing nginx annotations for backend HTTPS connections

How we migrated

We had two options:

- Add Envoy Gateway with another public IP address and configure DNS to perform round-robin between ingress-nginx and Envoy

- Configure Envoy Gateway to use the current IP address and move the whole traffic in one go.

Although the first option is safer, we chose the second for the simplicity of our operation.

The reserved IP address was pushed to the repo as part of EnvoyProxy configuration:

apiVersion: gateway.envoyproxy.io/v1alpha1

kind: EnvoyProxy

metadata:

name: ha-envoy-proxy

namespace: envoy-gateway

spec:

provider:

type: Kubernetes

kubernetes:

envoyService:

externalTrafficPolicy: Cluster

type: LoadBalancer

patch:

type: StrategicMerge

value:

spec:

loadBalancerIP: "146.235.214.235" # Reserved IP address on the cloud provider

ports:

- name: https-443

port: 443

targetPort: 10443

protocol: TCP

nodePort: 32050 # Fixed NodePort for external LB backend and firewall configuration

...

Critical: externalTrafficPolicy Setting

We initially encountered connection failures due to externalTrafficPolicy: Local (the default). This setting causes the NodePort to only listen on nodes that have an Envoy pod running. When the Oracle Cloud Load Balancer performed health checks on nodes without pods, they failed, marking all backends as unhealthy.

What about certificates?

We chose to use the existing certificates triggered by ingress-nginx via annotations:

---

apiVersion: gateway.networking.k8s.io/v1

kind: Gateway

...

spec:

gatewayClassName: envoy

listeners:

- name: https

protocol: HTTPS

port: 443

hostname: "*.cncf.io"

tls:

mode: Terminate

certificateRefs:

- name: guac-tls

namespace: guac

kind: Secret

group: ""

- name: auth-dex-tls

namespace: auth

kind: Secret

group: ""

...

However, the certificates have an owner reference to the Ingress object. This means deleting an Ingress would cascade delete the Certificate and its Secret.

Below one-liner, removes the ownerReference from all Certificates that reference an Ingress:

kubectl get certificate -A -o json | jq -r '.items[] | select(.metadata.ownerReferences[]? | .kind == "Ingress") | "\(.metadata.namespace) \(.metadata.name)"' | while read NS NAME

do

kubectl patch certificate $NAME -n $NS --type=json \

-p='[{"op": "remove", "path": "/metadata/ownerReferences"}]'

done

Cross-namespace certificate access

Since certificates are stored in different namespaces than the Gateway, we configured ReferenceGrant resources to allow cross-namespace access:

apiVersion: gateway.networking.k8s.io/v1beta1

kind: ReferenceGrant

metadata:

name: allow-gateway-to-certs

namespace: codimd

spec:

from:

- group: gateway.networking.k8s.io

kind: Gateway

namespace: envoy-gateway

to:

- group: ""

kind: Secret

name: codimd-tls

This pattern was repeated for each namespace containing certificates.

HTTPRoutes

ingress2gateway helped to prepare the HTTPRoute objects from existing Ingress resources.

We had a special case for one ingress with backend HTTPS configuration:

nginx.ingress.kubernetes.io/backend-protocol: HTTPS

nginx.ingress.kubernetes.io/proxy-ssl-name: api.services.cncf.io

nginx.ingress.kubernetes.io/proxy-ssl-secret: kdp/kcp-ca

nginx.ingress.kubernetes.io/proxy-ssl-verify: "on"

To achieve the same behavior with Envoy Gateway, we created a BackendTLSPolicy:

apiVersion: gateway.networking.k8s.io/v1

kind: BackendTLSPolicy

metadata:

name: kdp-backend-tls

namespace: kdp

spec:

targetRefs:

- group: ''

kind: Service

name: kcp-front-proxy

validation:

caCertificateRefs:

- name: kcp-ca

group: ''

kind: Secret

hostname: api.services.cncf.io

Troubleshooting

TLS handshake failures

If you encounter SSL_ERROR_SYSCALL errors during TLS handshake:

- Check Gateway listener: Ensure the HTTPS listener is configured on port 443

- Verify certificates are loaded: Check that all referenced certificates exist and are accessible

- Check ReferenceGrants: Ensure cross-namespace certificate access is allowed

- Review Envoy logs:

kubectl logs -n envoy-gateway-system -l gateway.envoyproxy.io/owning-gateway-name=shared-gatewayLoad balancer health check failures

If the cloud load balancer shows backends as unhealthy:

- Verify externalTrafficPolicy: Should be Cluster, not Local

- Check NodePort accessibility: Test from a node that the NodePort responds

- Review health check configuration: Ensure the LB health check matches the service configuration

- Check firewall rules: Verify security groups/NSGs allow traffic from LB subnet to NodePort

Certificate not being served

If OpenSSL can’t retrieve a certificate:

echo | openssl s_client -connect <lb-ip>:443 -servername <hostname> 2>/dev/null | openssl x509 -noout -textThis indicates the certificate isn’t loaded. Check:

- Certificate is referenced in Gateway certificateRefs

- ReferenceGrant exists for cross-namespace access

- Gateway status shows Programmed: True

Day 2 operation on certificates

We had decided to move the certificates later, to narrow the scope of the migration and easily use the current certificates at the time. However, when they expire, we could be in trouble. Here is what you need to do make sure that your certificates are managed by Gateway API + cert-manager:

1. Make sure that cert-manager supports Gateway API:

You need to enable Gateway API support on cert-manager:

apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: cert-manager

namespace: argocd

spec:

project: default

source:

repoURL: https://charts.jetstack.io

targetRevision: v1.17.2

chart: cert-manager

helm:

values: |

config:

enableGatewayAPI: true ## Make sure this exists!

2. Update the ClusterIssuer:

Either update the current issuer or create a new one:

apiVersion: cert-manager.io/v1

kind: ClusterIssuer

metadata:

name: letsencrypt-prod

spec:

acme:

preferredChain: ""

privateKeySecretRef:

name: letsencrypt-prod

server: https://acme-v02.api.letsencrypt.org/directory

solvers:

- http01:

gatewayHTTPRoute:

parentRefs:

- group: gateway.networking.k8s.io

kind: Gateway

name: shared-gateway ## this is the name of your gateway

namespace: envoy-gateway ## where your gateway resides

3. Annotate the Gateway for cert-manager

You need to add the annotation, just like we do for ingress-nginx:

apiVersion: gateway.networking.k8s.io/v1

kind: Gateway

metadata:

name: shared-gateway

namespace: envoy-gateway

annotations:

# needs to match with the ClusterIssuer you created/updated on previous step

cert-manager.io/cluster-issuer: letsencrypt-prod

spec:

gatewayClassName: envoy

4. Separate the listeners

We initially had one listener for all our hosts, but they need to be separated (unless you use DNS solver for a wildcard certificate).

apiVersion: gateway.networking.k8s.io/v1

kind: Gateway

metadata:

name: shared-gateway

namespace: envoy-gateway

annotations:

cert-manager.io/cluster-issuer: letsencrypt-prod

spec:

gatewayClassName: envoy

addresses:

- type: IPAddress

value: 146.235.214.235

listeners:

- name: https-guac

protocol: HTTPS

port: 443

hostname: guac.cncf.io

tls:

mode: Terminate

certificateRefs:

- name: guac-tls-gw

kind: Secret

group: ""

allowedRoutes:

namespaces:

from: All

# added for cert-manager HTTP01 solver

- name: http-guac

protocol: HTTP

port: 80

hostname: guac.cncf.io

allowedRoutes:

namespaces:

from: All

- name: http-api-guac

protocol: HTTP

port: 80

hostname: api.guac.cncf.io

allowedRoutes:

namespaces:

from: All

# added for cert-manager HTTP01 solver

- name: https-notes

protocol: HTTPS

port: 443

hostname: notes.cncf.io

tls:

mode: Terminate

certificateRefs:

- name: codimd-tls

kind: Secret

group: ""

allowedRoutes:

namespaces:

from: All

- name: http-notes

protocol: HTTP

port: 80

hostname: notes.cncf.io

allowedRoutes:

namespaces:

from: All

...

5. Remove redundant ReferenceGrants

Since the new certificates are created on the same namespace with the Envoy Gateway (shared-gateway in our case), we don’t need the ReferenceGrants anymore. We removed them:

kubectl delete referencegrant --all -AConclusion

The migration from ingress-nginx to Envoy Gateway required careful attention to:

- Certificate ownership and cross-namespace access

- Cloud load balancer integration (NodePort, health checks, externalTrafficPolicy)

- Backend TLS configuration for services requiring HTTPS upstream connections

The Gateway API’s multi-layer architecture provides better separation of concerns compared to ingress-nginx, though it requires understanding additional resources like ReferenceGrants and BackendTLSPolicy.

To sum it up, we can say that the cloud native world already provided alternatives before the sun setting of ingress nginx. We hope this small insight can help you in your journey of migrating away from ingress nginx.

The weight of AI models: Why infrastructure always arrives slowly

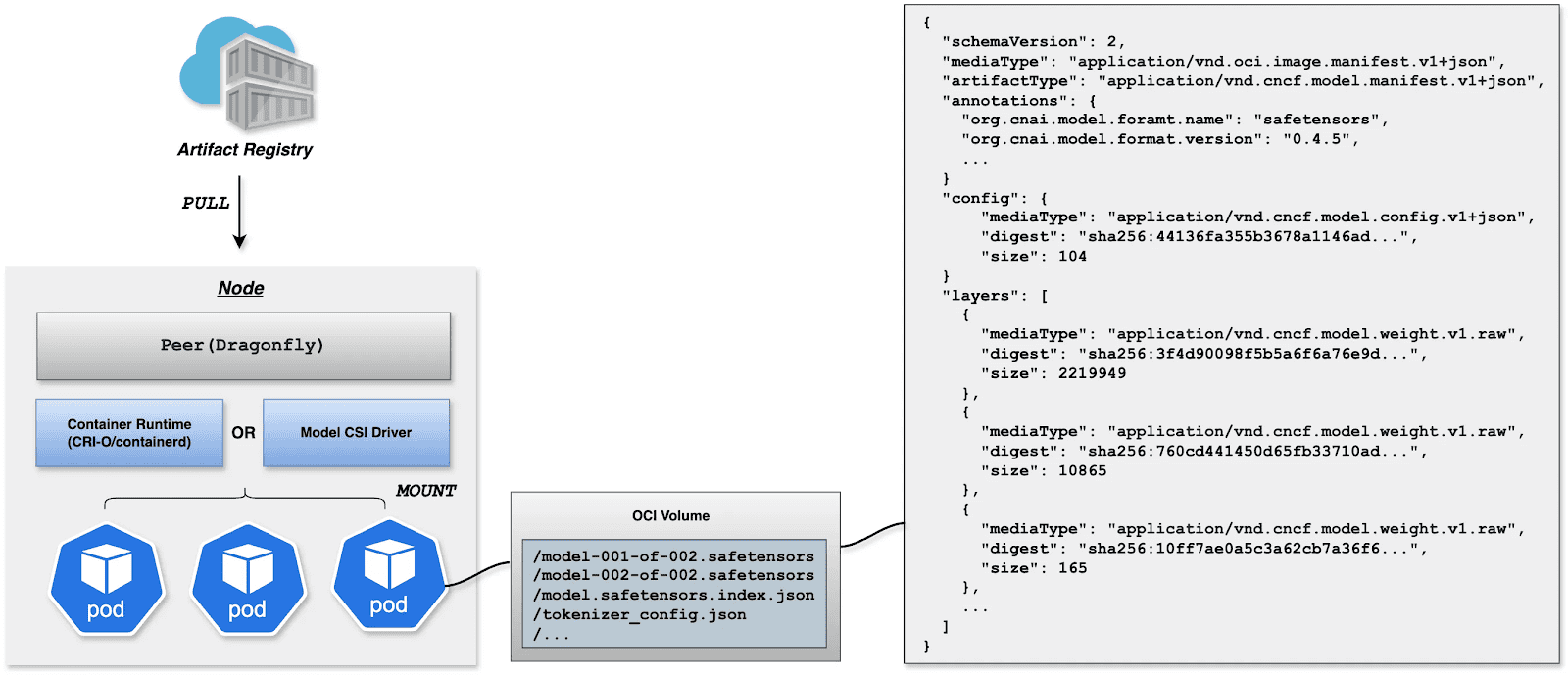

As AI adoption accelerates across industries, organizations face a critical bottleneck that is often overlooked until it becomes a serious obstacle: reliably managing and distributing large model weight files at scale. A model’s weights serve as the central artifact that bridges both training and inference pipelines — yet the infrastructure surrounding this artifact is frequently an afterthought.

This article addresses the operational challenges of managing AI model artifacts at enterprise scale, and introduces a cloud-native solution that brings software delivery best practices – versioning, immutability, and GitOps, to the world of large model files.

The gap nobody talks about — until it breaks production

The cloud native gap: Most existing ML model storage approaches were not designed with Kubernetes-native delivery in mind, leaving a critical gap between how software artifacts are managed and how model artifacts are managed. Within the CNCF ecosystem, projects such as ModelPack, ORAS, Harbor, and Dragonfly are exploring complementary approaches to managing and distributing large artifacts.

Today, enterprises operate AI infrastructure on Kubernetes yet their model artifact management lags behind. Software containers are pulled from OCI registries with full versioning, security scanning, and rollback support. Model weights, by contrast, are often downloaded via ad hoc scripts, copied manually between storage buckets, or distributed through unsecured shared filesystems. This gap creates deployment fragility, security risks, and operational overhead at scale.

When your model weighs more than your entire app

Modern foundation models are not small. A single model checkpoint can range from tens of gigabytes to several terabytes. For reference, a quantized LLaMA-3 70B model weighs approximately 140 GB, while frontier multimodal models can easily exceed 1 TB. These are not files you version-control with standard Git — they demand dedicated storage strategies, efficient transfer protocols, and careful access control.

The core challenges are: storage at scale, distribution speed, and reproducibility. Teams need to store multiple model versions, rapidly distribute them to GPU inference nodes across regions, and guarantee that any deployment can be traced back to an exact, immutable artifact.

Three paths forward — and why none of them are enough

Git LFS (Hugging Face Hub)Object Storage (S3, MinIO)Distributed Filesystem (NFS, CephFS)ProsNative version control (branches, tags, commits, history).Standard offering from cloud providers. Native support in engines like vLLM/SGLang.POSIX compatible. Low integration cost.ConsPoor protocol adaptation for cloud-native environments. Inherits Git’s transport inefficiencies, lacks optimizations for huge file distribution.Lacks structured metadata. Weak version management capabilities.Lacks structured metadata. Weak version management capabilities. High operational complexity for distributed filesystems. Rethinking the delivery pipeline: Models deserve better than a shell scriptThe approach described here treats AI model weights as first-class OCI (Open Container Initiative) artifacts, packaging them in the same container registries used for application images. This enables model delivery to leverage the full ecosystem of container tooling: security scanning, signed provenance, GitOps-driven deployment, and Kubernetes-native pulling.

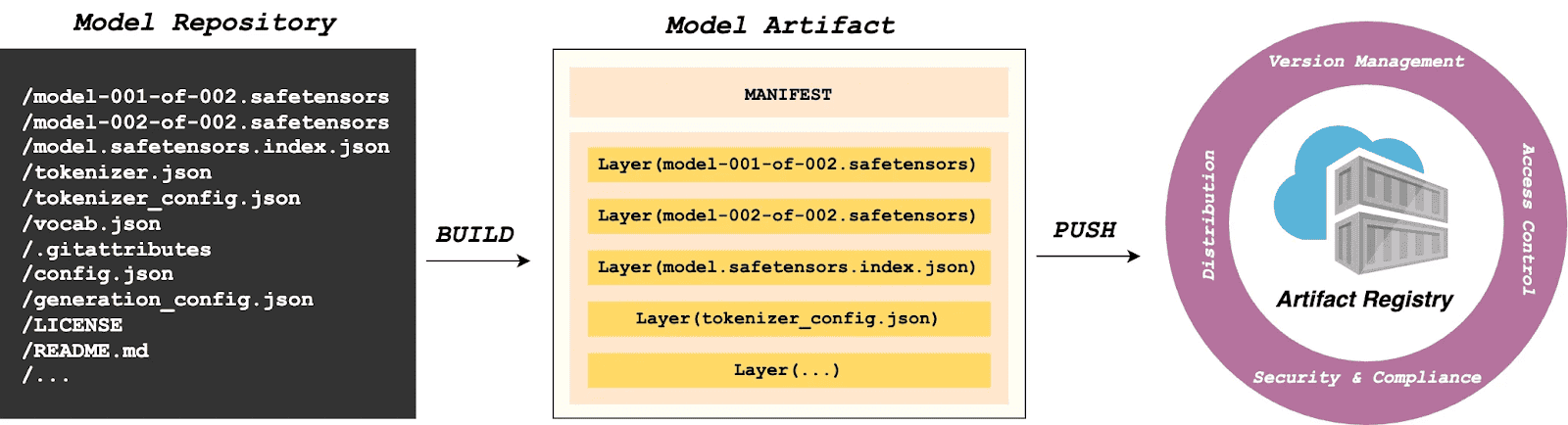

What If we shipped models the same way we ship code?

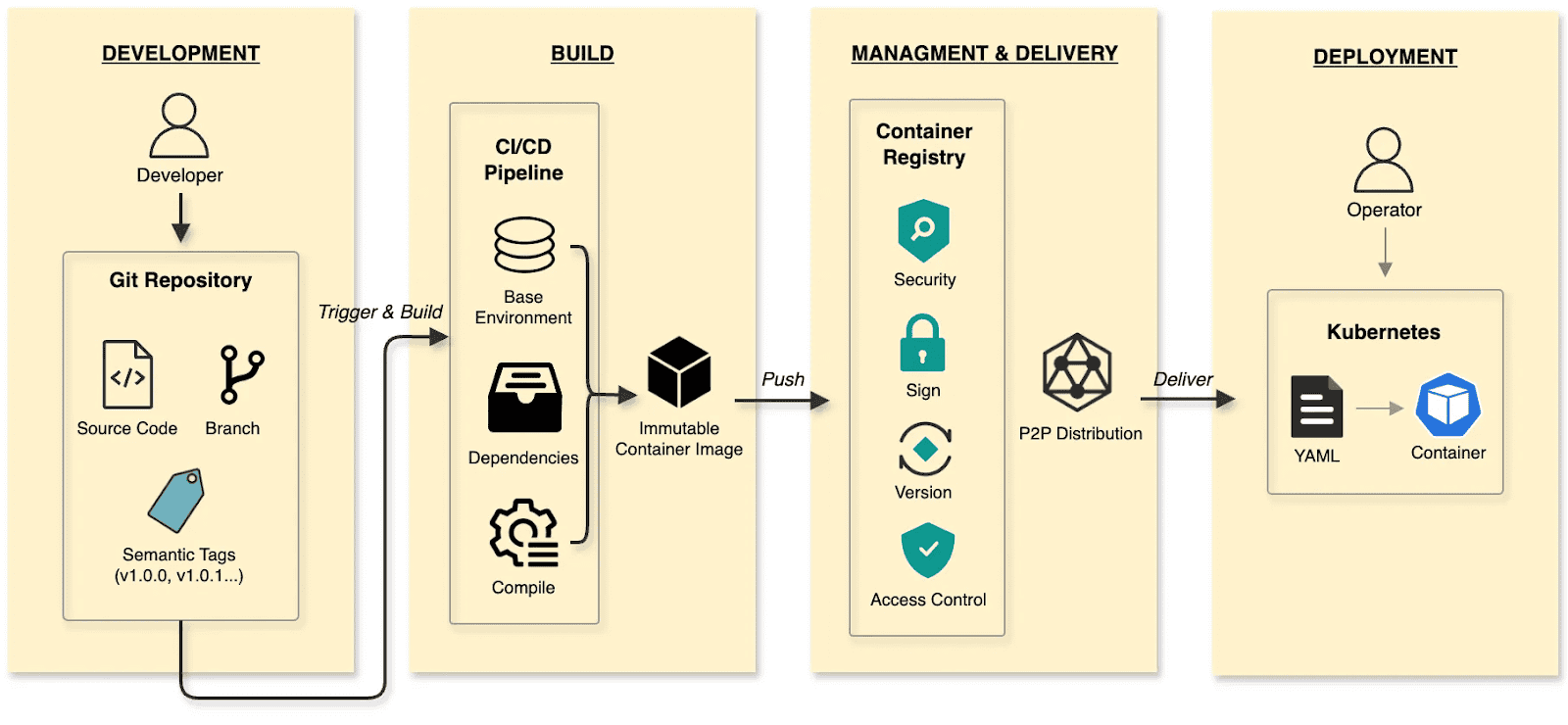

In the cloud-native era, developers have long established a mature and efficient paradigm for software delivery.

The software delivery:

- Develop: Developers commit code to a Git repository, manage code changes through branches, and define versions using tags at key milestones.

- Build: CI/CD pipelines compile and test, packaging the output into an immutable Container Image.

- Manage and deliver: Images are stored in a Container Registry. Supply chain security (scanning/signing), RBAC, and P2P distribution ensure safe delivery.

- Deploy: DevOps engineers use declarative Kubernetes YAML to define the desired state. The Container’s lifecycle is managed by Kubernetes.

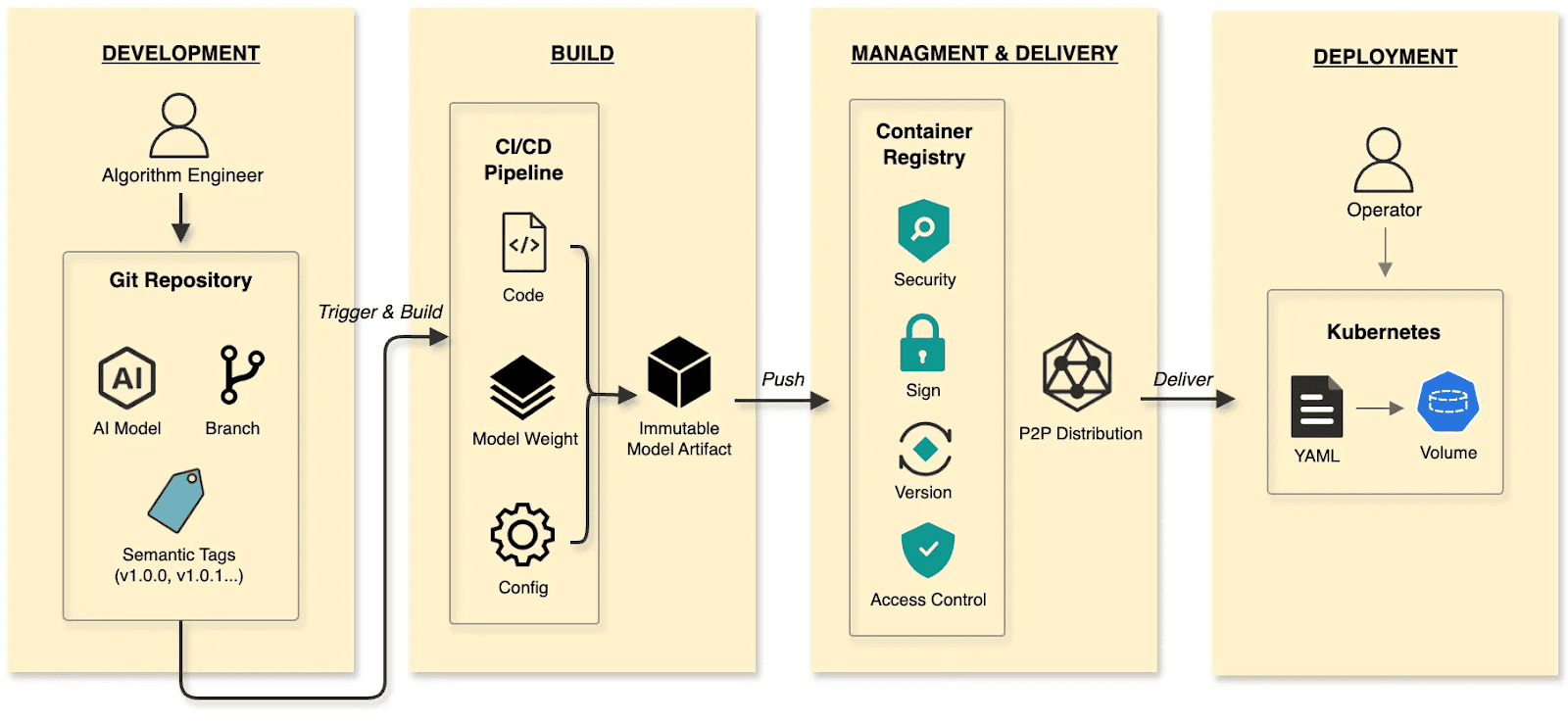

The cloud native AI model delivery:

- Develop: Algorithm engineers push weights and configs to the Hugging Face Hub, treating it as the Git Repository.

- Build: CI/CD pipelines package weights, runtime configurations, and metadata into an immutable Model Artifact.

- Manage and deliver: The Model Artifact is managed by an Artifact Registry, reusing the existing container infrastructure and toolchain.

- Deploy: Engineers use Kubernetes OCI Volumes or a Model CSI Driver. Models are mounted into the inference Container as Volumes via declarative semantics, decoupling the AI model from the inference engine (vLLM, SGLang, etc.).

By applying software delivery paradigms and supply chain thinking to model lifecycle management, we constructed a granular, efficient system that resolves the challenges of managing and distributing AI models in production.

Walking the pipeline: A build story in four steps

Build

modctl is a CLI tool designed to package AI models into OCI artifacts. It standardizes versioning, storage, distribution and deployment, ensuring integration with the cloud-native ecosystem.

Step 1: Auto-generate Modelfile

Run the following in the model directory to generate a definition file.

$ modctl modelfile generate .Step 2: Customize Modelfile

You can also customize the content of the Modelfile.

# Model name (string), such as llama3-8b-instruct, gpt2-xl, qwen2-vl-72b-instruct, etc.

NAME qwen2.5-0.5b

# Model architecture (string), such as transformer, cnn, rnn, etc.

ARCH transformer

# Model family (string), such as llama3, gpt2, qwen2, etc.

FAMILY qwen2

# Model format (string), such as onnx, tensorflow, pytorch, etc.

FORMAT safetensors

# Specify model configuration file, support glob path pattern.

CONFIG config.json

# Specify model configuration file, support glob path pattern.

CONFIG generation_config.json

# Model weight, support glob path pattern.

MODEL *.safetensors

# Specify code, support glob path pattern.

CODE *.pyStep 3: Login to Artifact Registry (Harbor)

$ modctl login -u username -p password harbor.registry.comStep 4: Build OCI Artifact

$ modctl build -t harbor.registry.com/models/qwen2.5-0.5b:v1 -f Modelfile .A Model Manifest is generated after the build. Descriptive information such as ARCH, FAMILY, and FORMAT is stored in a file with the media type application/vnd.cncf.model.config.v1+json.

{

"schemaVersion": 2,

"mediaType": "application/vnd.oci.image.manifest.v1+json",

"artifactType": "application/vnd.cncf.model.manifest.v1+json",

"config": {

"mediaType": "application/vnd.cncf.model.config.v1+json",

"digest": "sha256:d5815835051dd97d800a03f641ed8162877920e734d3d705b698912602b8c763",

"size": 301

},

"layers": [

{

"mediaType": "application/vnd.cncf.model.weight.v1.raw",

"digest": "sha256:3f907c1a03bf20f20355fe449e18ff3f9de2e49570ffb536f1a32f20c7179808",

"size": 4294967296

},

{

"mediaType": "application/vnd.cncf.model.weight.v1.raw",

"digest": "sha256:6d923539c5c208de77146335584252c0b1b81e35c122dd696fe6e04ed03d7411",

"size": 5018536960

},

{

"mediaType": "application/vnd.cncf.model.weight.config.v1.raw",

"digest": "sha256:a5378e569c625f7643952fcab30c74f2a84ece52335c292e630f740ac4694146",

"size": 106

},

{

"mediaType": "application/vnd.cncf.model.weight.code.v1.raw",

"digest": "sha256:15da0921e8d8f25871e95b8b1fac958fc9caf453bad6f48c881b3d76785b9f9d",

"size": 394

},

{

"mediaType": "application/vnd.cncf.model.doc.v1.raw",

"digest": "sha256:5e236ec37438b02c01c83d134203a646cb354766ac294e533a308dd8caa3a11e",

"size": 23040

}

]

}Step 5: Push

$ modctl push harbor.registry.com/models/qwen2.5-0.5b:v1Management

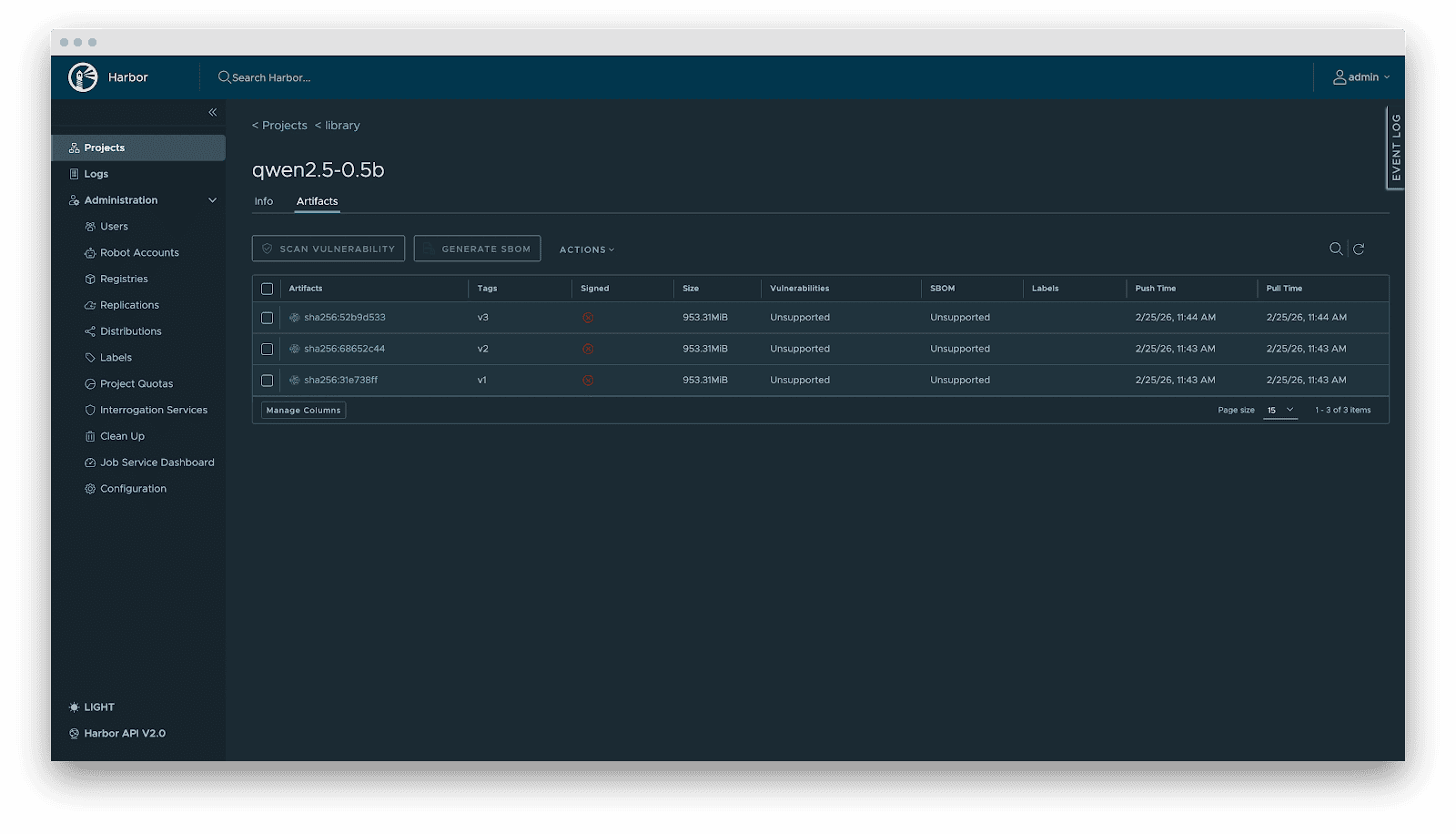

Current AI infrastructure workflows focus heavily on model distribution performance, often ignoring model management standards. Manual copying works for experiments, but in large-scale production, lacking unified versioning, metadata specs, and lifecycle management is poor practice. As the standard cloud-native Artifact Registry, Harbor is ideally suited for model storage, treating models as inference artifacts.

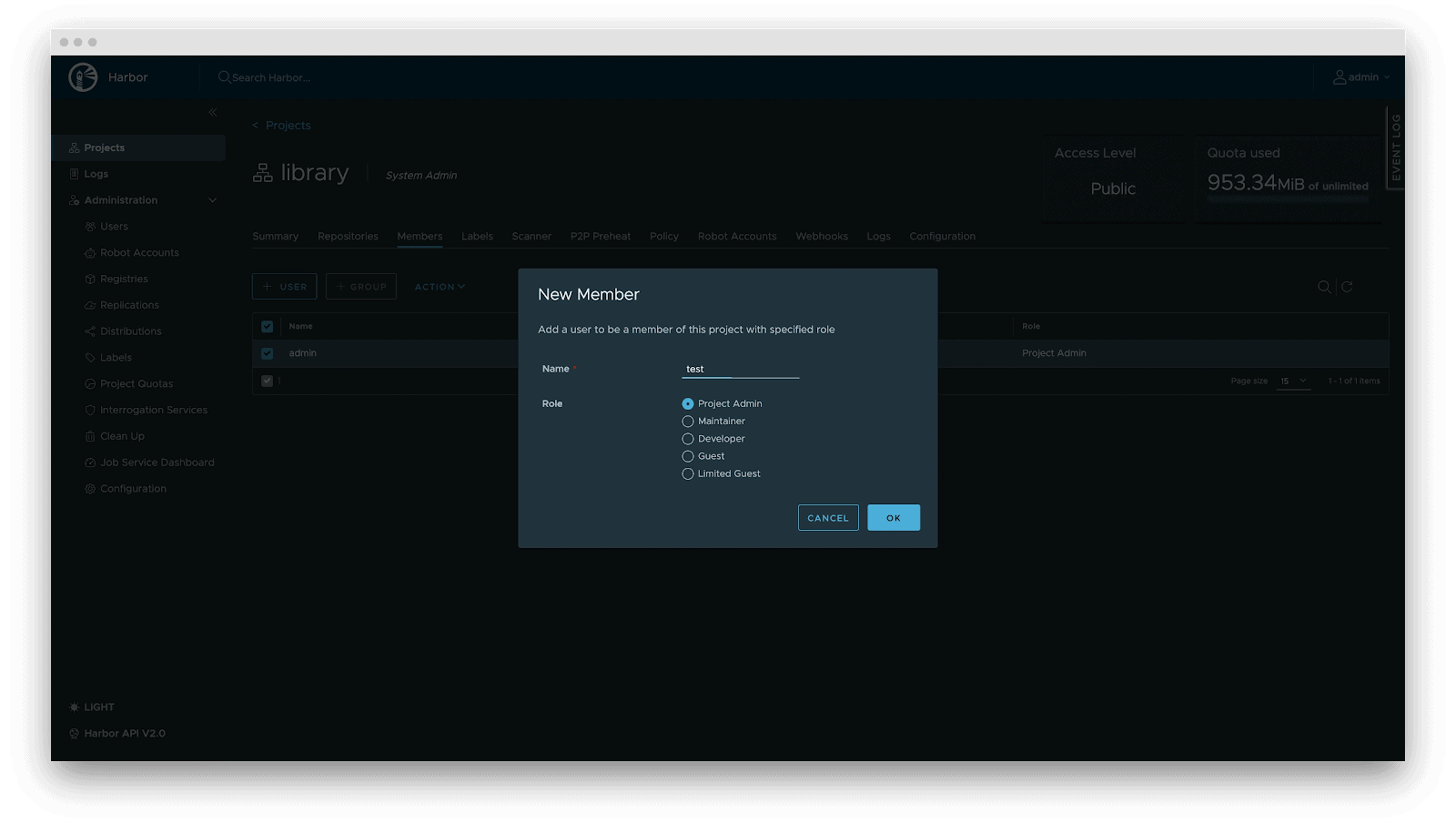

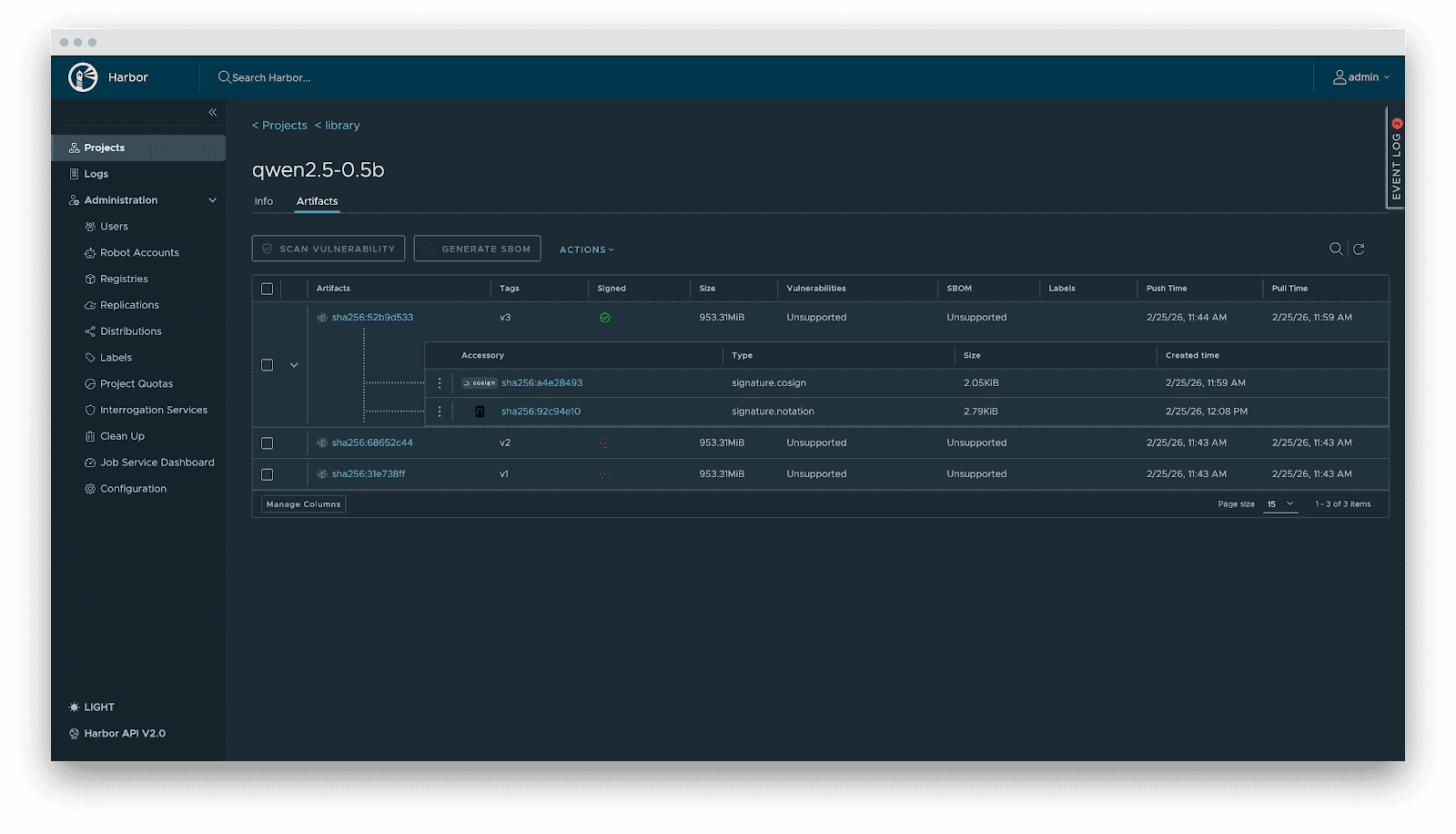

Harbor standardizes AI model management through:

- Versioning: Models are OCI Artifacts with immutable Tags and Sha256 Digests. This guarantees deterministic inference environments. Meanwhile, it visually presents the model’s basic attributes, parameter configurations, display information, and the file list, which not only reduces the risks of unknown versions but also achieves full transparency of the model.

- RBAC: Fine-grained access control. Control who can PUSH (e.g., Algorithm Engineers), who can only PULL (e.g., Inference Services), and who has administrative privileges.

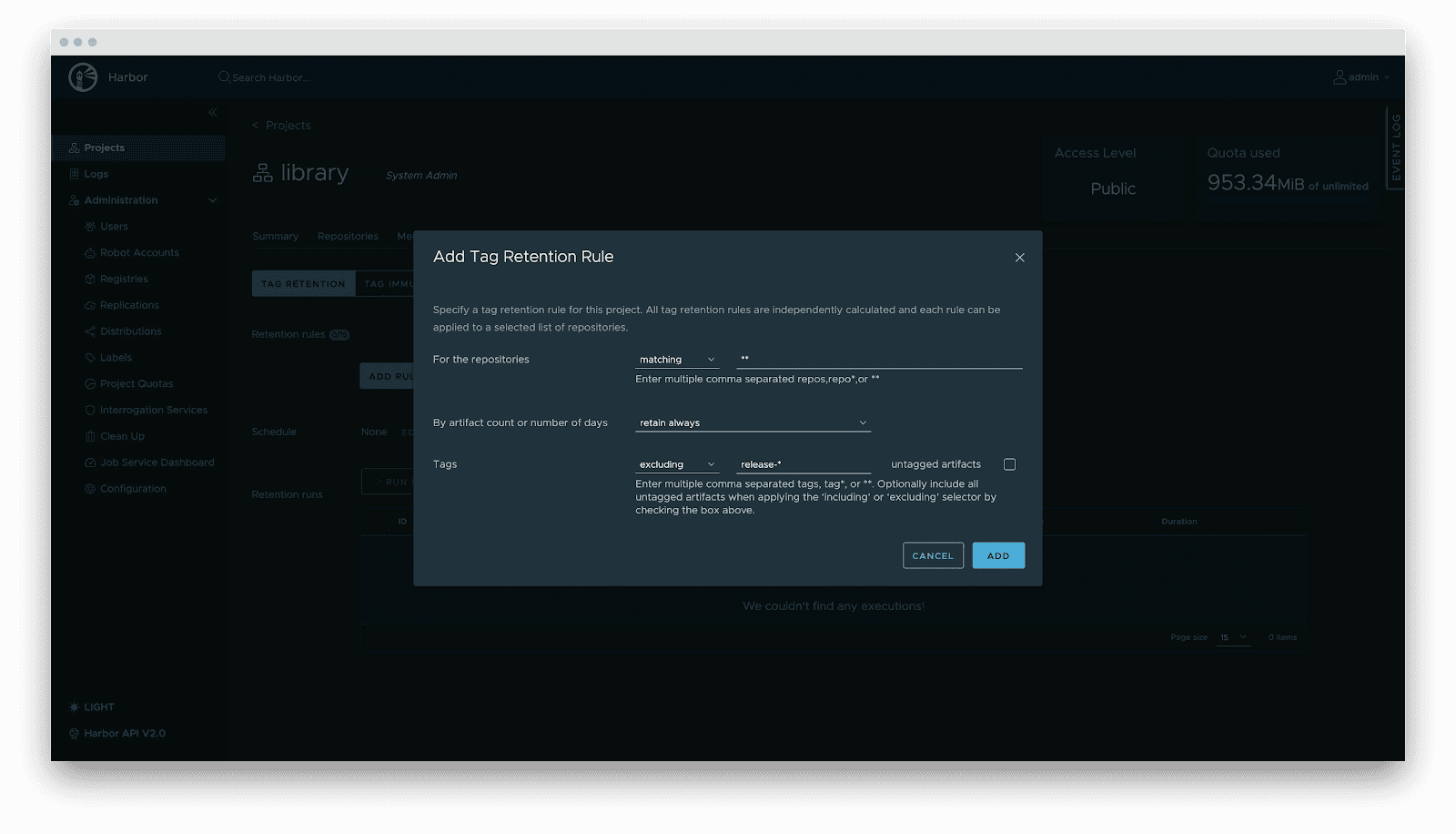

- Lifecycle management: Tag retention policies automatically purge non-release versions while locking active versions, balancing storage costs with stability.

- Supply chain security: Integration with Cosign/Notation for signing. Harbor enforces signature verification before distribution, preventing model poisoning attacks.

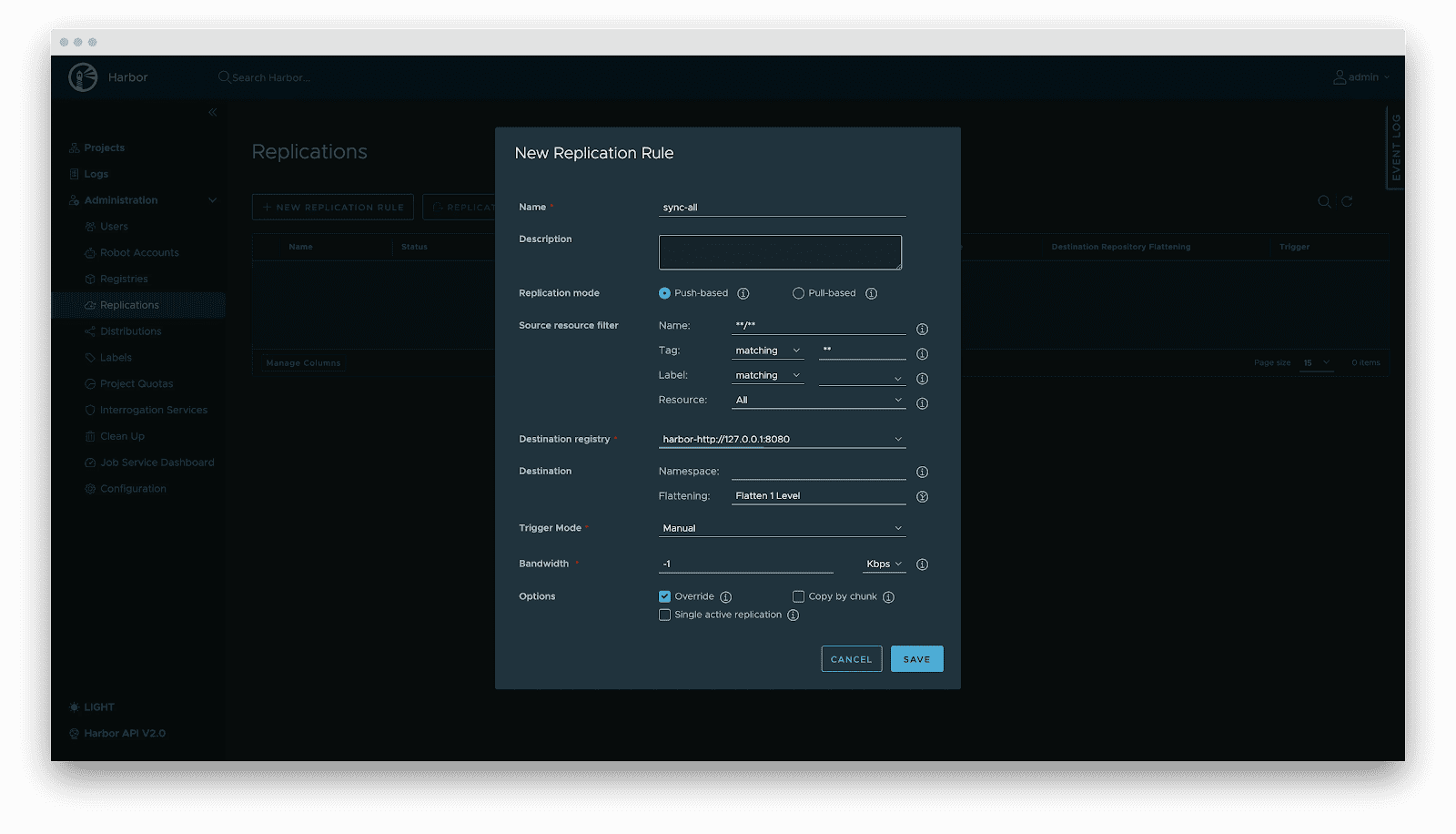

- Replication: Automated, incremental synchronization between central and edge registries or active-standby clusters.

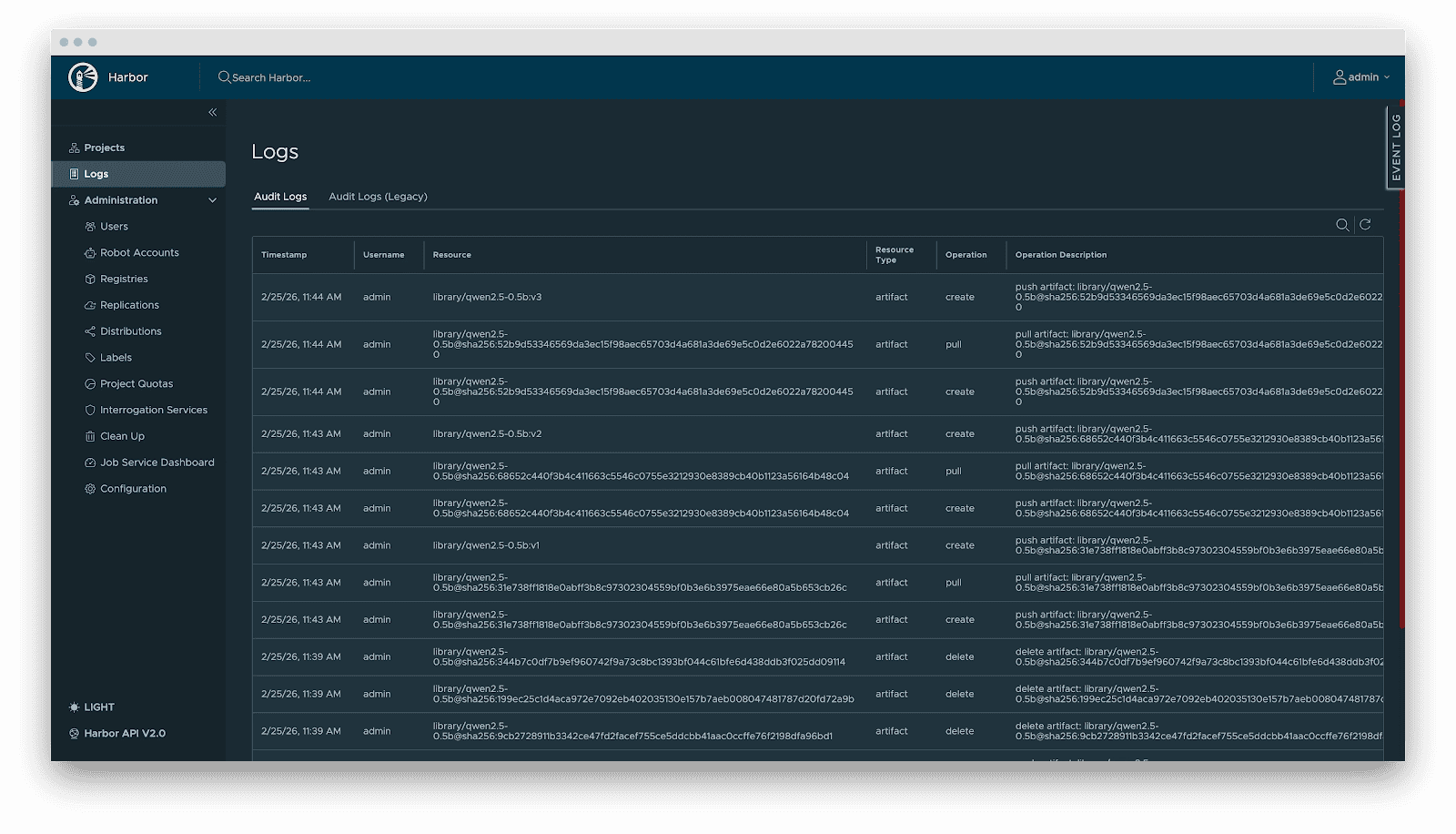

- Audit: Comprehensive logging of all artifact operations (pull/push/delete) for security compliance and traceability.

Delivery

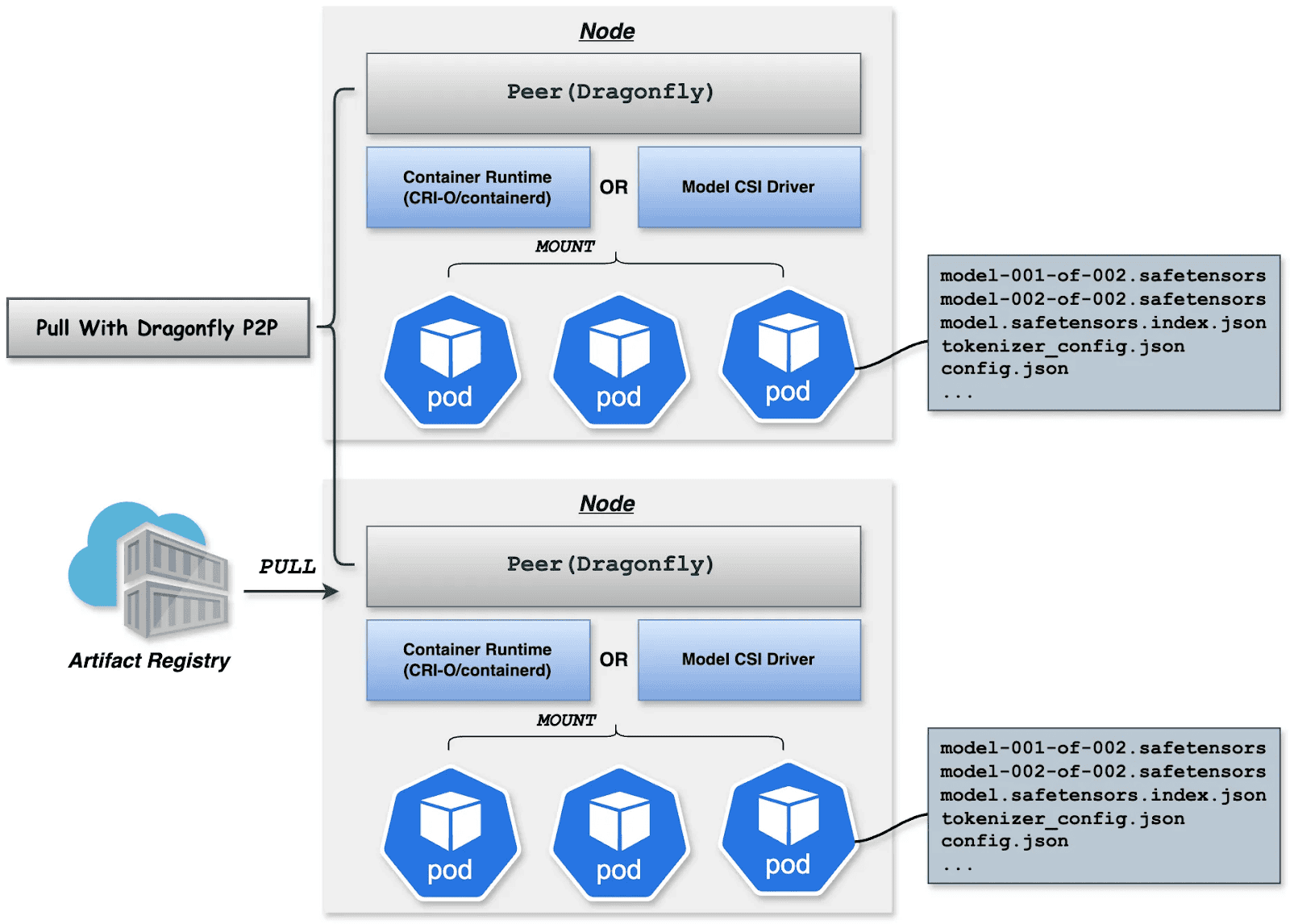

Downloading terabyte-sized model weights directly from the origin introduces bandwidth bottlenecks. We utilize Dragonfly for P2P-based distribution, integrated with Harbor for preheating.

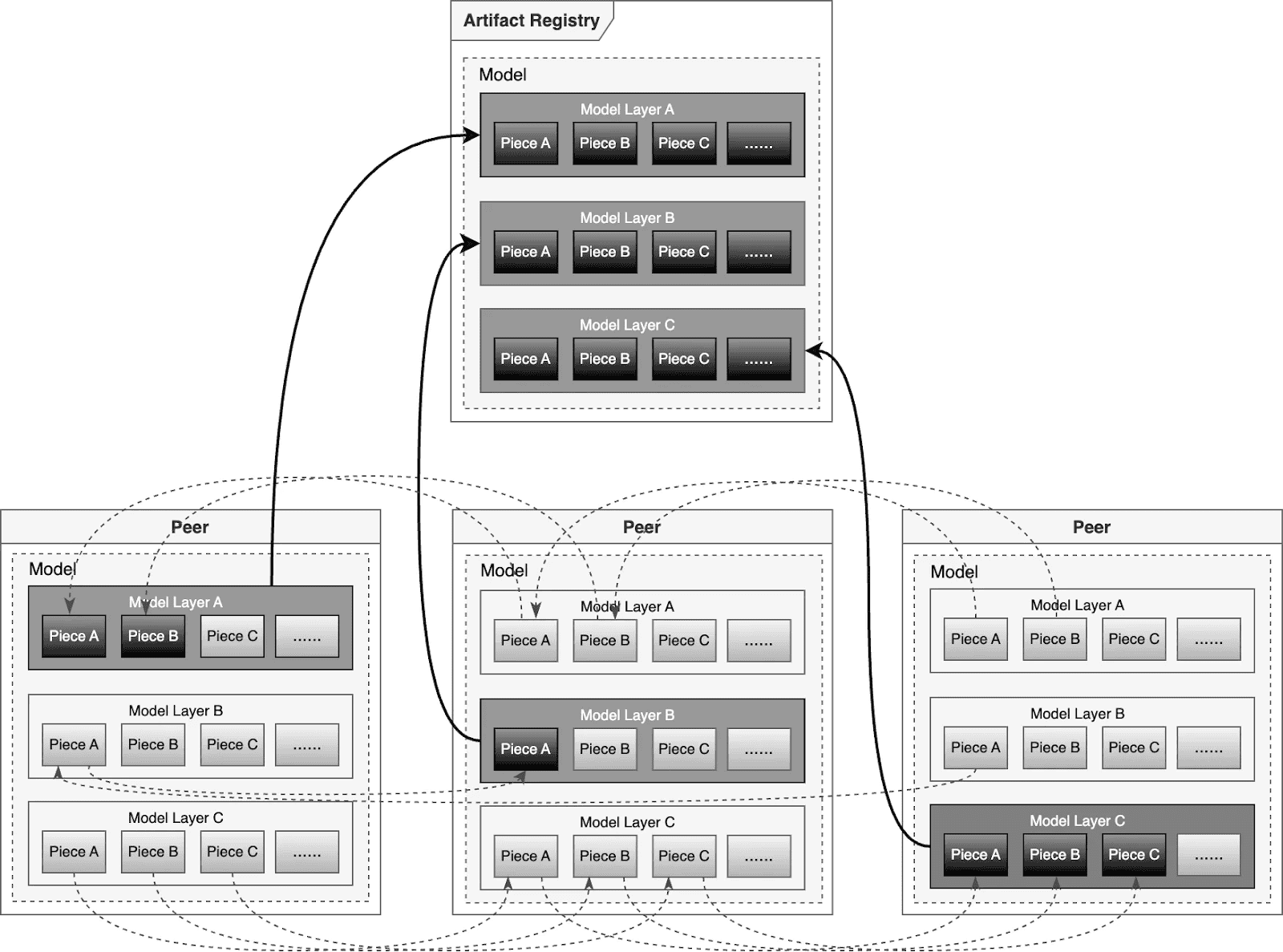

Dragonfly P2P-based distribution

For large-scale distribution scenarios, Dragonfly has been deeply optimized based on P2P technology. Taking the example of 500 nodes downloading a 1TB model, the system distributes the initial download tasks of different layers across nodes to maximize downstream bandwidth utilization and avoid single-point congestion. Combined with a secondary bandwidth-aware scheduling algorithm, it dynamically adjusts download paths to eliminate network hotspots and long-tail latency. For individual model weight, Dragonfly splits individual model weights into pieces and fetches them concurrently from the origin. This enables streaming-based downloading, allowing users to share models without waiting for the complete file. This solution has been proven in high-performance AI clusters, utilizing 70%–80% of each node’s bandwidth and improving model deployment efficiency.

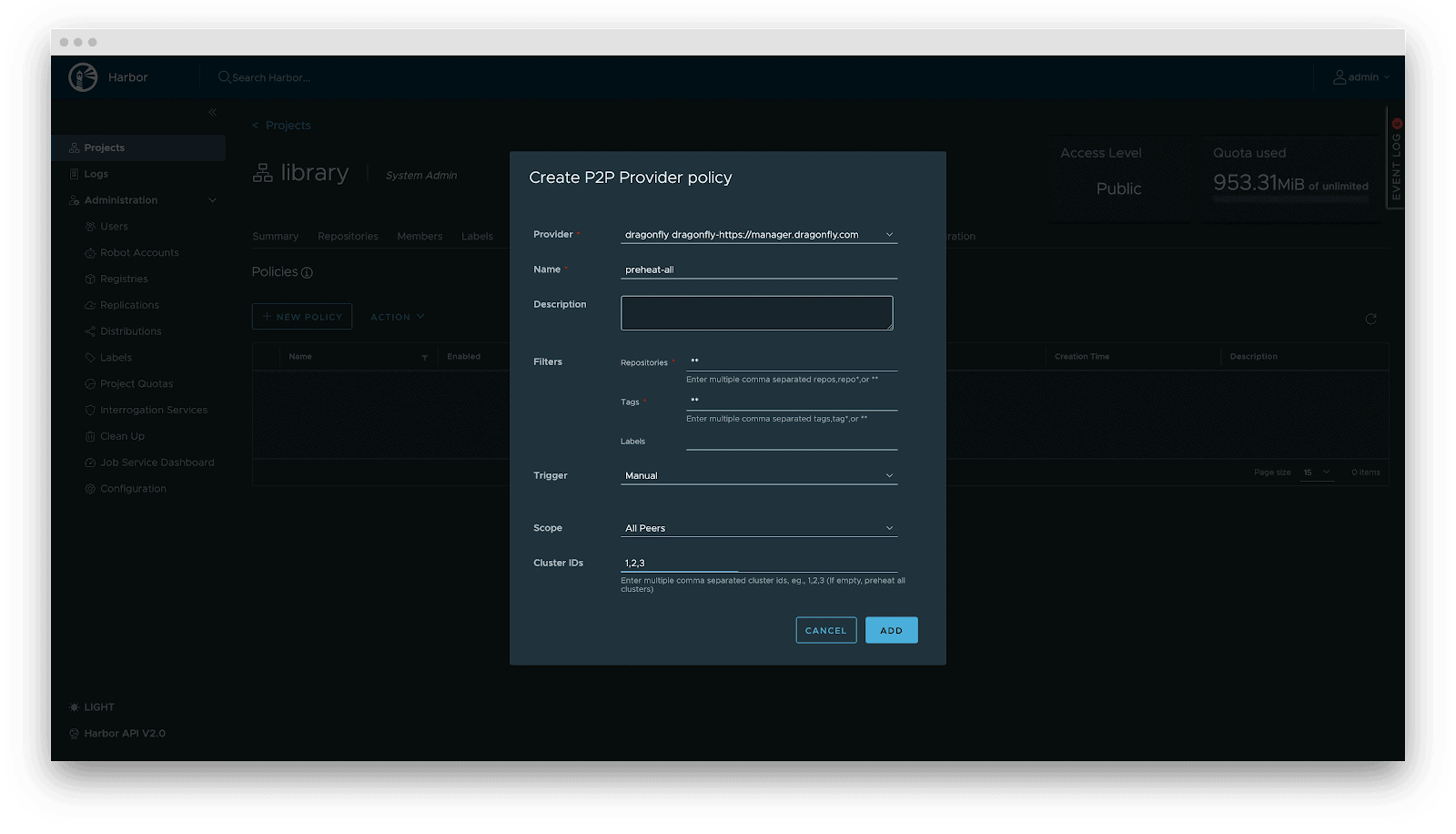

Preheating

For latency-sensitive inference services, Harbor triggers Dragonfly to distribute and cache data on target nodes before service scaling. When the instance starts, the model loads from the local disk, achieving zero network latency.

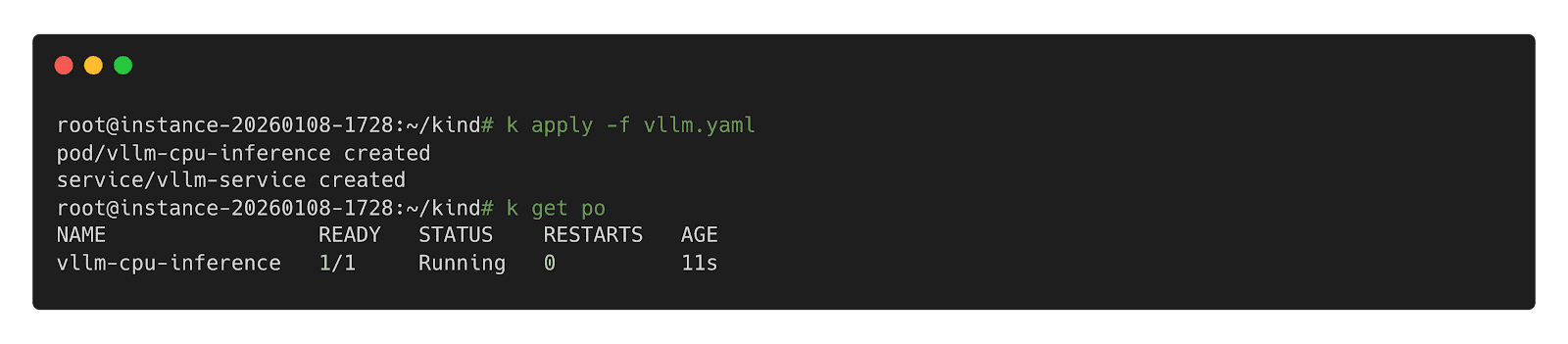

Deployment

Deployment focuses on decoupling the Model (Data) from the Inference Engine (Compute). By leveraging Kubernetes declarative primitives, the Engine runs as a Container, while the Model is mounted as a Volume. This native approach not only enables multiple Pods on the same node to share and reuse the model, saving disk space, but also leverages the preheating and P2P capabilities of Harbor & Dragonfly to eliminate the latency of pulling large model weights, significantly improving startup speed.

OCI Volumes (Kubernetes 1.31+)